Introduction

In a sensorimotor control system, sensory information affects body movement. Complex environments require humans to have well-developed perceptual functions that provide detailed information (Castiello, 2005). Several studies have revealed that the visual, auditory, and olfactory systems (Castiello et al., 2006; Tubaldi et al., 2008) play important roles in the sensorimotor system. In the visual system’s regulation of the sensorimotor system, there is a distinction between perception and behavior; in other words, both the perception of visual information and the execution of motor control are involved. Schmidt and Lee described two different control loops and discussed the role of feedback regulation (Schmidt and Lee, 2005). In motor skill learning and control, an actuator receives sensory information and sends commands to an effector; this arrangement is called an open loop. A closed loop, by contrast, can correct errors through visual feedback: a controller compares the ideal and actual results. Woodworth divided the movement process into two parts, namely, motor planning and control (Woodworth, 1970). In the planning phase, a motor plan is created before the actual movement and helps to initiate it. In the control phase, movement errors can be corrected in time. According to the planning-control model (Glover and Dixon, 2001), the generation of a motor plan in humans depends on the cues around the target, whereas planning does not adjust or control behavior once the behavior has been initiated. Previous research has focused primarily on vision as the dominant sensory modality that affects other sensory modalities. However, when a multisensory system exists, that is, when numerous sensory modalities are available and integrated by the central nervous system, a perceptual experience may be generated in the absence of a given sensory modality as other modalities become enhanced, an effect known as inverse effectiveness (Stein and Stanford, 2008).
For example, tasters described a white wine that had been colored red as a red wine (Morrot et al., 2001). To a certain extent, this reflects the dominance of vision in the integration of vision and olfaction. Moreover, several previous studies have found that the visual characteristics of an object, such as color and shape, can affect an individual’s ability to detect, discriminate, and recognize odors (Jay and Dolan, 2003; De Araujo et al., 2005; Dematte et al., 2008; Robert et al., 2010). Conversely, when visual information is ambiguous, olfactory information can modulate the visual modality. Olfactory information has been shown to modulate visual attention, directing participants to a target that is congruent with the odor (Chen et al., 2013). In addition, odor cues can evoke a perceptual change when a human is judging an unclear movement of a target (Kuang and Tao, 2014). That study investigated how odors affect the perceived direction of movement. When directional perception was ambiguous, the olfactory information was significantly related to the perceived direction and was integrated with visual information.

An odor stimulus can also be a cue that enters the processing system and influences motor behavior in humans. For example, while performing a word recognition task in a room scented with air freshener, participants responded faster to words such as cleaning and tidying up than to non-cleaning-related words (Holland et al., 2005). In addition, participants who were given biscuits as a reward cleaned a room more frequently than the control group that did not receive biscuits. When researchers investigated customer motivation and behavior, they concluded that providing certain ambient odors increases the time customers spend in a store (Vinitzky and Mazursky, 2011). A pleasant smell in a space leads to a better evaluation and memory of an experience than an unpleasant smell (Cirrincione et al., 2014; Doucé et al., 2014). Compared with providing odorless air, diffusing pleasant odors, such as orange, seawater, or mint, also produces similar results (Schifferstein et al., 2011). Thus, several lines of evidence suggest that olfaction can influence human planning and behavior.

Reach-to-grasp movements are a typical way for humans to handle objects. Such movements help the human body to interact with the environment during early childhood and to build perception from past experience in adulthood. For example, babies can grasp an apple, and adults can lift a dumbbell. Hence, how humans modulate hand movements on the basis of sensory information is an important question. Most previous studies focused on the effects of semantic perception and quantity perception on grasping movement. Glover put a grape or apple label on the target and asked participants to grasp it. In the early stage of a grasping movement, the grip aperture when grasping the target labeled apple was larger than that when grasping the target labeled grape, but as the distance between the hand and the target shortened, visual feedback reduced this difference until it disappeared (Glover et al., 2004). To complete a reach-to-grasp movement, humans need to collect information about the target’s relevant properties, spatial position, and so on. One factor that influences this information collection is olfactory information, with different odor cues affecting the reaction and movement velocity of humans toward a target. For example, representations evoked by olfactory information have been demonstrated to affect the size of a hand grip (Castiello et al., 2006). When an odor cue represents an object larger than the target to be grasped, the amplitude of the peak grip aperture is greater and the movement duration (MD) is longer than when the odor cue represents an object that is small (Parma et al., 2011). Conversely, when the odor cue represents a small object, the amplitude of the peak grip aperture for a large target to be grasped is smaller and the MD is shorter but the movement time is longer. Hand movement planning was facilitated only when the odor cue represented a small object and the target to be grasped was also small. There was no difference when the odor cue represented a large object.
When a target is large and an odor cue represents a small object, the maximum aperture (MA) is smaller and the MD is longer than when the odor cue represents a large object. This is because the hand grip is adjusted by visual feedback at the later stages of execution (Tubaldi et al., 2009). Interestingly, fruit juice can also produce the same result when participants grasp a large target (Parma et al., 2011). These findings indicate that people can adjust their hand movements on the basis of olfactory and other chemical senses. Using transcranial magnetic stimulation, researchers found that participants’ hand motor potential increased when the odor of food was congruent with the target (Rossi et al., 2008).

Research on multisensory integration shows that individuals can use many kinds of sensory information, such as visual and auditory information, to perceive (Ernst and Bülthoff, 2004). Visual and olfactory information are also integrated, and this integration affects how movement is adjusted. Odor cues can evoke a perceptual change when a human is judging an unclear movement of a target. However, the aforementioned studies are narrow in focus in that they examine only the effects of olfactory information on the motor system; in addition, their results have led to inconsistent conclusions. On the one hand, our review indicates that olfactory information affects reach-to-grasp planning, as reflected in measures such as reaction time (RT), and also affects execution, as reflected in measures such as MD. On the other hand, the interaction between the visual and olfactory systems remains unclear, and it is unknown how this interaction affects hand movement. To advance the understanding of multisensory integration and the optimization of movement performance, the purpose of the present investigation was to explore the integration of visual and olfactory stimuli in movement. If preceding olfactory information influences how a human collects information about a target, then there should be a measurable effect on hand movement kinematics. We hypothesized that a reach-to-grasp hand movement would be enhanced when the odor cue was congruent with the visual target. In that case, the olfactory information provides correct cues for making a movement plan, and the visual feedback may help to control the action, which should facilitate participants’ RT and MD. By contrast, if the sizes of the odor-cued object (OCO) and the visual target were incongruent, interference effects would occur: participants may make the wrong plan and spend additional time adjusting it during execution. We further hypothesized that visual feedback can help them to correct the movement error.
Thus, the specific aims of the present study were to explore the effects of olfactory information on the execution of the reach-to-grasp movement and to compare the behavioral differences associated with visuo-olfactory integration.

Materials and Methods

Participants

In total, 29 young adults (15 women and 14 men) between 18 and 25 years of age (mean, 23.03 ± 1.69 years) participated in this experiment. Before starting the experiment, all participants answered a questionnaire about their history of nasal disease, smoking, exercise, and self-reported olfactory function. All participants reported normal or corrected-to-normal vision as well as a normal sense of smell, and all were right-handed. All eligible participants underwent the Sniffin’ Sticks test, and all showed good olfactory function and odor recognition. This test consists of 16 standardized odor pens (Burghart Messtechnik Company, Germany) that are presented to the participant one at a time. Participants identify each smell using a forced choice among four alternatives (one correct and three distractors). Each pen is held approximately 2 cm away from the nose. The interval between presentations of the odor pens is at least 30 s (Hummel et al., 1997).
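The scoring of the identification test described above can be sketched as follows. This is only an illustrative sketch: the names `PenResponse`, `identification_score`, and `chance_score` are hypothetical, and no cutoff for "good olfactory function" is assumed because the text does not state one. The only values taken from the text are the 16 pens and the four response alternatives.

```python
from dataclasses import dataclass

N_PENS = 16         # from the text: 16 standardized odor pens
N_ALTERNATIVES = 4  # from the text: one correct answer, three distractors


@dataclass
class PenResponse:
    """One forced-choice response (hypothetical record layout)."""
    pen_id: int
    chosen: str   # label the participant selected
    correct: str  # label of the odor actually in the pen


def identification_score(responses):
    """Return the number of correctly identified pens (0 to N_PENS)."""
    if len(responses) != N_PENS:
        raise ValueError(f"expected {N_PENS} responses, got {len(responses)}")
    return sum(r.chosen == r.correct for r in responses)


def chance_score():
    """Expected number of correct answers under pure guessing."""
    return N_PENS / N_ALTERNATIVES
```

With four alternatives per pen, pure guessing would yield an expected score of 4 out of 16, which gives a reference point for interpreting an individual score.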

Participants were naive to the purpose of the present experiment. Each participant’s session lasted approximately 1.5 h. Participants were financially compensated for their participation once they had completed the experiment. The experimental procedures were conducted in accordance with the recommendations of the ethics committee of the Sport of Psychology Department at Shanghai University of Sport, which approved the protocol for this study. Written informed consent was obtained from each participant in accordance with the requirements of the Sport of Psychology Department at Shanghai University of Sport.

Apparatus and Stimuli

In consideration of ecological validity, the targets consisted of four real fruits or foods that were grouped based on their relative sizes: small (garlic and ginger) and large (apple and orange). The targets were selected so that their visual features and size would remain similar throughout the experimental period. The reach-to-grasp motion toward the small targets required a precision grip, with the thumb pressed in opposition to the index finger. The large targets required a power grip, with the fingers flexed against the palm. The odor stimuli corresponded to the targets, that is, ginger, garlic, apple, and orange. The ginger odor was selected from the extended identification test, and the others were from the original Sniffin’ Sticks (Hummel et al., 1997; Jessica et al., 2012). The scents were delivered by placing the odor stick approximately 2 cm from both nostrils of each participant in a well-ventilated room (Burghart Messtechnik Company, Germany).
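As an illustration of how the size grouping above translates into trial labels, the following minimal sketch classifies an odor and target pairing as size-congruent or size-incongruent and looks up the grip type that a target requires. The names `SIZE_GROUP`, `GRIP_FOR_SIZE`, `size_congruent`, and `required_grip` are hypothetical, and whether every odor was paired with every target is an assumption, not something stated in the text.

```python
# Size groups as described in the text.
SIZE_GROUP = {
    "garlic": "small",
    "ginger": "small",
    "apple": "large",
    "orange": "large",
}

# Grip type required by each target size, as described in the text.
GRIP_FOR_SIZE = {
    "small": "precision",  # thumb opposed to the index finger
    "large": "power",      # fingers flexed against the palm
}


def size_congruent(odor, target):
    """True when the odor-cued object and the visual target share a size group."""
    return SIZE_GROUP[odor] == SIZE_GROUP[target]


def required_grip(target):
    """Grip type required to grasp the given visual target."""
    return GRIP_FOR_SIZE[SIZE_GROUP[target]]
```

Under this labeling, a garlic odor followed by a ginger target counts as size-congruent even though the odor and target differ in identity, whereas a garlic odor followed by an apple target is size-incongruent.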

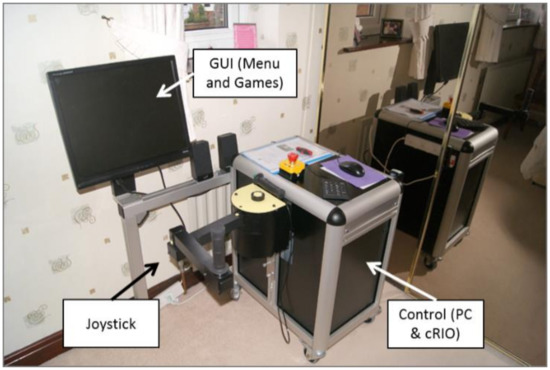

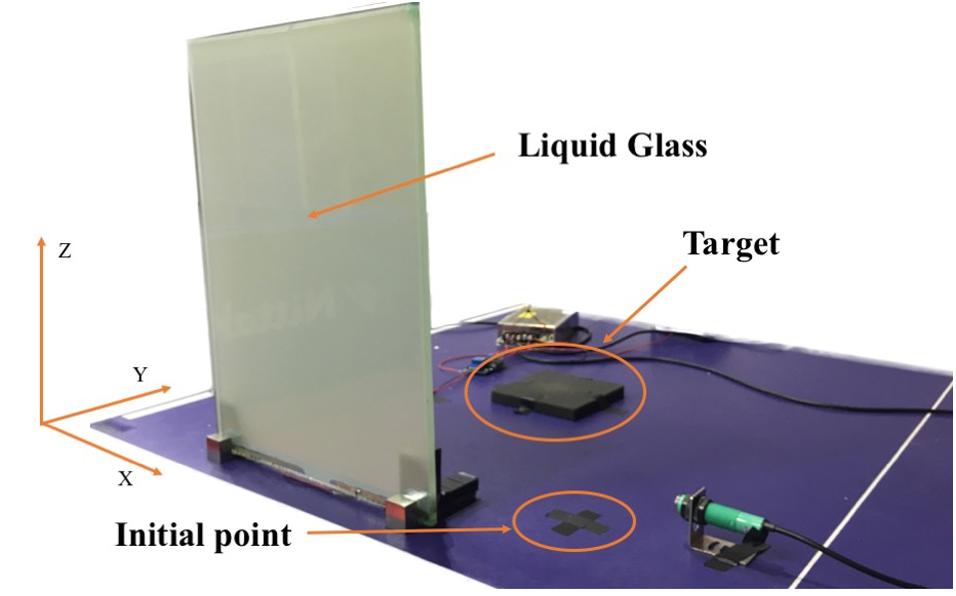

Vision was controlled using a liquid glass (polymer-dispersed liquid-crystal) screen that rendered the target visually accessible by changing from translucent to transparent in 1 ms. An infrared automatic sensor switch triggered this change, and the transition of the liquid glass to transparent served as the start signal. Visual feedback was manipulated under two conditions: open loop and closed loop. In the open-loop condition, participants received no visual feedback, whereas in the closed-loop condition, they were provided with visual feedback. According to the planning-control model, the entire grasping process consists of two stages: motor planning and online control. The influence of the size of the OCO on the grasping aperture occurs mainly in the former stage. As the grasping movement progresses, visual feedback in a closed loop corrects the hand performance. In an open loop, by contrast, vision is withheld to remove the online correction, so as to investigate how the size of the OCO modulates hand movement.
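The logic behind the two feedback conditions can be illustrated with a toy simulation. This is not the authors' analysis or a model fitted to data: the function `simulate_aperture`, the proportional `gain`, the number of `steps`, and all aperture values are hypothetical and serve only to show why withholding vision preserves the planning error, whereas visual feedback progressively removes it.

```python
def simulate_aperture(planned_mm, required_mm, closed_loop, steps=10, gain=0.3):
    """Toy grip-aperture trajectory over a reach (all values hypothetical).

    planned_mm  -- aperture set by the motor plan (e.g., biased by the odor cue)
    required_mm -- aperture that the visual target actually requires
    closed_loop -- True if visual feedback is available during the movement
    """
    aperture = planned_mm
    trajectory = [aperture]
    for _ in range(steps):
        if closed_loop:
            # Online control: compare required and actual aperture and
            # correct a fraction of the remaining error at each step.
            error = required_mm - aperture
            aperture += gain * error
        # Open loop: no feedback, so the planned aperture is left unchanged.
        trajectory.append(aperture)
    return trajectory


# Example: a plan biased toward a small object while the target is large.
open_loop = simulate_aperture(60.0, 90.0, closed_loop=False)
closed = simulate_aperture(60.0, 90.0, closed_loop=True)
```

In this sketch the open-loop trajectory keeps the planned aperture throughout, so any bias introduced during planning remains visible in the kinematics, while the closed-loop trajectory converges toward the required aperture.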

The target to be grasped was placed along the midline of the participant’s body and 20 cm away from an initial point on the laboratory bench (see Figure 1).

Figure 1. Image of the experimental setup.

[…]