Posts Tagged Neurorehabilitation

[Review] Brain–Computer Interfaces for Upper Limb Motor Recovery after Stroke: Current Status and Development Prospects – Full Text

Posted by Kostas Pantremenos in Paretic Hand on January 17, 2024

Brain–computer interfaces (BCIs) are a group of technologies that allow mental training with feedback for post-stroke motor recovery. Varieties of these technologies have been studied in numerous clinical trials for more than 10 years, and their design and software are constantly being improved. Despite the positive treatment results and the availability of registered medical devices, a number of problems currently hinder the wide clinical application of BCI technologies. This review provides information on the most studied types of BCIs and their training protocols and describes the evidence base for the effectiveness of BCIs for upper limb motor recovery after stroke. The main problems of scaling this technology and ways to solve them are also described.

Introduction

A brain–computer interface (BCI) is a technology that converts data on the electrical or metabolic activity of the brain into control signals for an external technical device. In post-stroke rehabilitation, a BCI is used to provide feedback to a patient during motor imagery training [1–3]. The scientific justification for this method is the evidence that motor imagery has a positive effect on neuroplasticity by activating motor structures of the central nervous system (CNS) [4–8]. By providing feedback during motor imagery, BCI systems enhance the effectiveness of such training sessions [9]. In general, BCI training in patients after stroke involves the following steps: the patient is asked to mentally perform a movement of the paralyzed limb; non-invasive sensors record the brain signals that accompany the mental performance of the task; these signals are recognized in real time and converted into a control command for an external device; and the external device gives the patient feedback on the quality of the mental task performance [10].
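The four steps of a training trial can be sketched in a few lines. This is only an illustration under stated assumptions, not the processing used by any device in the review: it assumes an EEG-based interface, a 250 Hz sampling rate, a drop in 8–13 Hz band power during imagery as the decoded feature, and a 30% threshold. The function names and the synthetic signals are hypothetical.

```python
import numpy as np

FS = 250  # sampling rate in Hz (assumed)

def band_power(signal, fs, low, high):
    """Mean spectral power of `signal` between `low` and `high` Hz."""
    spectrum = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / fs)
    mask = (freqs >= low) & (freqs <= high)
    return spectrum[mask].mean()

def erd_percent(rest, task, fs=FS, band=(8.0, 13.0)):
    """Relative drop in band power during the task versus rest, in percent."""
    p_rest = band_power(rest, fs, *band)
    p_task = band_power(task, fs, *band)
    return 100.0 * (p_rest - p_task) / p_rest

def feedback_command(erd, threshold=30.0):
    """Convert the decoded value into a command for the external device."""
    return "move" if erd >= threshold else "hold"

# Synthetic one-second epochs: a 10 Hz rhythm that weakens during imagery.
t = np.arange(FS) / FS
rng = np.random.default_rng(0)
rest = 2.0 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(FS)
task = 1.0 * np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(FS)

erd = erd_percent(rest, task)
print(f"ERD = {erd:.1f} %, command = {feedback_command(erd)}")
```

In a real system the command would drive the feedback device (for example an exoskeleton or an on-screen cue) rather than being printed.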

To date, at least 20 randomized controlled trials (RCTs) on the use of BCI for upper limb motor recovery after stroke are known worldwide, and 11 systematic reviews, 8 of which are accompanied by a meta-analysis, have been published on this topic between 2019 and 2023 [11–21]. Foreign and domestic manufacturers have developed several medical devices for use in clinical practice of post-stroke rehabilitation [22–25].

In Russia, clinical trials of BCI after stroke first began in 2011 at Research Center of Neurology (Moscow, Russia) [26, 27]. In a subsequent multicentre RCT, it was shown that a course of training with the BCI–exoskeleton complex improved the rehabilitation results of patients with focal brain damage in terms of hand motor recovery [28]. The proven technology was subsequently registered as a medical device and is currently used in a number of clinical centres [24, 29].

Despite the extensive evidence base and the availability of ready-made BCI technologies, there are currently some limitations to their widespread use in post-stroke rehabilitation, and further research and development is underway [30–37].

The aim of this review is to analyse scientific articles devoted to the study of the use of BCI technologies in post-stroke upper limb paresis, to outline the main problems and prospects for further development in this field.

Literature search methodology

Articles from peer-reviewed, full-text, open access scientific journals on the use of non-invasive BCIs for upper limb motor recovery after stroke were selected for analysis. The search query was formulated according to the rules of the MEDLINE bibliographic database: ((brain–computer[tiab] OR brain–machine[tiab] OR neural interfac*[tiab]) OR “Brain–Computer interfaces”[Mesh]) AND stroke[mh] AND (upper extremity[tiab] OR hand[tiab] OR arm[tiab]). Additionally, a literature search was conducted in the eLIBRARY.RU system using the key words “brain–computer interface”, “neurocomputer interface”, “neurointerface”. The date of the search was July 3, 2023.
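For readers who want to rerun or adapt the search, the query above can be assembled from its three term groups. The sketch below only builds the string; the helper name `any_of` is hypothetical, and straight quotation marks replace the typographic ones so the string can be pasted into a search box.

```python
def any_of(terms, field):
    """Join terms with OR, tagging each with a field such as [tiab]."""
    return "(" + " OR ".join(f"{term}[{field}]" for term in terms) + ")"

interface_terms = any_of(["brain–computer", "brain–machine", "neural interfac*"], "tiab")
mesh_term = '"Brain–Computer interfaces"[Mesh]'
limb_terms = any_of(["upper extremity", "hand", "arm"], "tiab")

query = f"({interface_terms} OR {mesh_term}) AND stroke[mh] AND {limb_terms}"
print(query)
```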

Varieties of brain–computer interface systems and their application after stroke

All BCIs used in research or in the practice of post-stroke rehabilitation have distinctive features (see the Figure). The training protocols and BCI models studied in RCTs differ in the control paradigm of the interface, the type of signal recorded, the online signal processing algorithm, and the type of external technical device for providing feedback.

[ARTICLE] Increasing the delivery of upper limb constraint-induced movement therapy programs for stroke and brain injury survivors: evaluation of the ACTIveARM project – Full Text

Posted by Kostas Pantremenos in Constraint induced movement therapy CIMT, Paretic Hand on January 7, 2024

Abstract

Purpose

To increase the number of constraint-induced movement therapy (CIMT) programs provided by rehabilitation services.

Methods

A before-and-after implementation study involving nine rehabilitation services. The implementation package to help change practice included file audit–feedback cycles, 2-day workshops, poster reminders, a community-of-practice and drop-in support. File audits were conducted at baseline, every three months for 1.5 years, and once after support ceased to evaluate maintenance of change. CIMT participant outcomes were collected to evaluate CIMT effectiveness and maintenance (Action Research Arm Test and Motor Activity Log). Staff focus groups explored factors influencing CIMT delivery.

Results

CIMT adoption improved from baseline where only 2% of eligible people were offered and/or received CIMT (n = 408 files) to more than 50% over 1.5 years post-implementation (n = 792 files, 52% to 73% offered CIMT, 27%–46% received CIMT). Changes were maintained at 6-month follow-up (n = 172 files, 56% offered CIMT, 40% received CIMT). CIMT participants (n = 74) demonstrated clinically significant improvements in arm function and occupational performance. Factors influencing adoption included interdisciplinary collaboration, patient support needs, intervention adaptations, a need for continued training, and clinician support.

Conclusions

The implementation package helped therapists overcome an evidence-practice gap and deliver CIMT more routinely.

IMPLICATIONS FOR REHABILITATION

- Constraint induced movement therapy (CIMT) is a highly effective intervention for arm recovery after acquired brain injury, recommended in multiple clinical practice guidelines yet delivery of CIMT in practice remains rare.

- A multifaceted implementation package including clinician training workshops, a community of practice, drop in support and regular audit and feedback cycles improved delivery of CIMT programs in practice by neurorehabilitation teams.

- Stroke survivors and people with brain injury who received a CIMT program in usual practice demonstrated clinically important improvements in arm function, dexterity and occupational performance.

Introduction

Constraint-induced movement therapy (CIMT) is a recommended intervention for arm recovery after stroke and acquired brain injury in multiple clinical practice guidelines [1–4]. A systematic review of 44 randomised controlled trials reported positive effects on arm motor function, arm-hand activities, self-reported amount of arm-hand use and quality of arm-hand movement in daily life (effect sizes from <0.2 to 0.8) [5], yet delivery of CIMT in practice remains limited [6]. An international survey of therapists who reported using CIMT found that CIMT programs were being offered but not always delivered with fidelity, and most therapists offered CIMT programs only once or twice annually (n = 99, 58.6%) [7]. Therapists who routinely deliver CIMT report that factors such as organisational support and access to resources and training enable implementation and sustainability [8].

Adoption of CIMT into routine practice (i.e., where CIMT is offered and delivered to all eligible and consenting patients) remains a significant evidence–practice gap. Only 11% of eligible stroke survivors received CIMT in 2020 during their rehabilitation in Australia [6]. Strategies that support implementation include CIMT education and training, written resources, ongoing coaching, mentoring, and modelling from a senior therapist [9,10]. This highlights a need for active support beyond initial education and training to facilitate CIMT implementation and maintenance in routine practice. Thus, effective strategies are needed to help therapists adopt, offer, and routinely deliver CIMT to eligible patients. To address this evidence-practice gap, we developed a staff behaviour change intervention as part of the Australian Constraint Therapy Implementation study of the Arm (ACTIveARM), described in detail elsewhere [11].

There is a need to evaluate the impact of behaviour change interventions, such as ACTIveARM, on the delivery of evidence-based interventions, in this case, CIMT programs. The impact can be measured via patient outcomes and clinician behaviour change [12]. Comprehensive evaluation can also identify additional team and organisational factors that may influence outcomes if the behaviour change intervention were to be scaled up to other organisations.

RE-AIM is an evaluative framework that identifies the essential elements needed for sustained adoption and implementation of evidence-based interventions [13]. The framework comprises five key dimensions: Reach to the target population; Effectiveness of the evidence-based intervention; Adoption of the intervention by staff, settings or institutions; Implementation of the intervention including program adaptations, fidelity, costs, and consistency of program delivery; and Maintenance of the intervention effects, both maintenance of patient outcomes (individual level) and routine delivery of the intervention in practice over time (setting level) [13]. The RE-AIM framework can be used to evaluate the effectiveness of implementation strategies, and the scale-up of such strategies and interventions to other settings [14], and was used in the ACTIveARM study.

The aim of this study was to evaluate whether the ACTIveARM implementation package, a theoretically-informed behaviour change intervention, could change therapist behaviour and increase the number of CIMT programs offered and delivered to eligible stroke and brain injury patients.

The primary research question related to RE-AIM dimensions of Adoption and Maintenance (setting level):

Q1. Is there an increase in the number and proportion of eligible patients who are offered a CIMT program after therapists receive the ACTIveARM implementation package (Adoption), and is this practice change sustained (Maintenance – setting level)?

Secondary research questions related to the Reach, Effectiveness, Implementation, and Maintenance (individual and setting levels) of CIMT programs in practice were:

Q2. Is there an increase in the number and proportion of eligible patients who are provided with a CIMT program after therapists receive the ACTIveARM implementation package (Reach), and is this practice change sustained (Maintenance – setting level)?

Q3. Do patients with stroke and traumatic brain injury who complete a CIMT program achieve upper limb outcomes consistent with published outcomes (Effectiveness), and are these effects sustained (Maintenance – individual level)?

Q4. How consistently is CIMT delivered across settings and staff, including adaptations to improve feasibility in public health settings? (Implementation)

Q5. What supports are needed by therapy teams to sustain changes in their practice, and continue to offer and deliver CIMT programs as part of routine care (Maintenance – setting level)? […]

[ARTICLE] Unsupervised robot-assisted rehabilitation after stroke: feasibility, effect on therapy dose, and user experience

Posted by Kostas Pantremenos in Paretic Hand, REHABILITATION, Rehabilitation robotics on January 7, 2024

Abstract

Background

Unsupervised robot-assisted rehabilitation is a promising approach to increase the dose of therapy after stroke, which may help promote sensorimotor recovery without requiring significant additional resources and manpower. However, the unsupervised use of robotic technologies is not yet a standard, as rehabilitation robots often show low usability or are considered unsafe to be used by patients independently. In this paper we explore the feasibility of unsupervised therapy with an upper limb rehabilitation robot in a clinical setting, evaluate the effect on the overall therapy dose, and assess user experience during unsupervised use of the robot and its usability.

Methods

Subacute stroke patients underwent a four-week protocol composed of daily 45-minute sessions of robot-assisted therapy. The first week consisted of supervised therapy, where a therapist explained how to interact with the device. The second week was minimally supervised, i.e., the therapist was present but intervened only if needed. After this phase, if participants had learnt how to use the device, they proceeded to two weeks of fully unsupervised training. Feasibility, dose of robot-assisted therapy achieved during unsupervised use, user experience, and usability of the device were the primary outcome measures. Questionnaires to evaluate usability and user experience were administered after the minimally supervised week and at the end of the study, to evaluate the impact of therapists’ absence.

Results

Unsupervised robot-assisted therapy was found to be feasible, as 12 out of the 13 recruited participants could progress to unsupervised training. During the two weeks of unsupervised therapy participants on average performed an additional 360 minutes of robot-assisted rehabilitation. Participants were satisfied with the device usability (mean System Usability Scale scores > 79), and no adverse events or device deficiencies occurred.
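For context on the usability figure, a System Usability Scale score is derived from ten answers on a 1–5 scale using the standard scoring rule: odd-numbered items contribute the answer minus 1, even-numbered items contribute 5 minus the answer, and the sum is multiplied by 2.5. The sketch below applies that rule; the answers are hypothetical and are not data from the study.

```python
def sus_score(responses):
    """Score one System Usability Scale questionnaire (ten answers, each 1-5)."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten answers on a 1-5 scale")
    total = 0
    for item, answer in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (answer - 1) if item % 2 == 1 else (5 - answer)
    return total * 2.5

# Hypothetical answers from three participants (not data from the study).
answers = [
    [5, 1, 5, 2, 4, 1, 5, 1, 4, 2],
    [4, 2, 4, 2, 4, 2, 4, 2, 4, 2],
    [5, 1, 5, 1, 5, 1, 5, 1, 5, 1],
]
scores = [sus_score(a) for a in answers]
mean_score = sum(scores) / len(scores)
print(scores, round(mean_score, 1))
```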

Conclusions

We demonstrated that unsupervised robot-assisted therapy in a clinical setting with an actuated device for the upper limb was feasible and can lead to a meaningful increase in therapy dose.

Participant performing a therapy exercise with the ReHapticKnob. To train with the device, participants need to fix their fingers to the handles with Velcro straps and log in to their therapy account with the fingerprint reader. A pushbutton keyboard is used to interact with the device and the virtual environment displayed on the screen. The view of the hand (visual feedback) is blocked by the hand cover, as the exercises require users to focus on the sensory feedback from the affected hand to solve the different tasks.

[BLOG POST] Robotic neurorehabilitation for the upper limb – new insights

Posted by Kostas Pantremenos in Neuroplasticity, Paretic Hand, Rehabilitation robotics on November 13, 2023

Authors: Mihaela Molnar, Oana Vanta

1 Introduction | Robotic neurorehabilitation for the upper limb

2 The importance of robotic neurorehabilitation

4 A brief introduction to WristBot

5 Latest advances in neurorehabilitation involving robotic devices

6 Multidisciplinary evaluation of recovery – assessment of sensorimotor performance

7 The promises of robotic devices in neurorehabilitation

Introduction | Robotic neurorehabilitation for the upper limb

Chronic and incapacitating neurological conditions significantly burden families and society [1]. Brain injuries and other neurological pathologies can reduce a patient’s quality of life by causing motor or sensory loss or dysfunction [2]. Stroke is one of the leading causes of mortality and disability around the globe [3]. After a stroke, patients frequently have sensorimotor impairments: the upper limb is affected in more than two-thirds of individuals, and half of them experience a persisting loss of arm function [2].

Spasticity in the upper extremities affects 17% to 40% of stroke survivors, making it harder for them to perform activities of daily living (ADL). Upper-limb therapy is essential in the first six months following the stroke because, after that time, stroke survivors’ motor and ADL recovery diminishes. Six months after a stroke, 33% to 66% of individuals have not regained functional use of their upper extremities [3].

The importance of robotic neurorehabilitation

Innovative robotic devices have been created over the past few decades to assist physicians in neurorehabilitation [2]. Traditional post-stroke rehabilitation methods include:

- “hands-on” therapy (manual therapy techniques)

- constraint-induced movement therapy

- repetitive task training

- mirror therapy.

These methods frequently require patients to perform partially or fully assisted movements of the arm and hand joints under a therapist’s observation.

Robot-assisted treatment

The cost-effectiveness of traditional therapies has been constrained by how labor- and time-intensive they are. Robot-assisted treatment (RT) is a newer post-stroke rehabilitation strategy in which robotic devices give patients motor or task-oriented training. With RT, stroke survivors can train independently with less supervision from therapists, receive timely feedback on their performance from the robotic devices, and adhere better to treatment thanks to games or interactive upper-limb tasks, while receiving repetitive, high-intensity training in a cost-effective manner [3].

Robotic neurorehabilitation is appealing because of its potential for easy deployment, its adaptability to various motor impairments, and its excellent measurement reliability [4]. One of the major objectives of rehabilitation for people with neurological diseases is to increase mobility. High dosage and intensity, sufficient practice, specific objectives, motivation, and specialized expertise are all crucial for improving outcomes in neurorehabilitation [1].

Robotic-assisted gait training (RAGT), which may deliver a stronger treatment dosage than standard rehabilitation, is anticipated to increase mobility more successfully than conventional therapy [1]. In this application sector, “robotic technology” refers to any mechatronic device with a certain level of intelligence that may physically influence the patient’s behavior to optimize and improve his or her sensorimotor rehabilitation [2].

These robots have two main functions:

- Evaluate human sensorimotor function

- Retrain the human brain to enhance the quality of life [2].

Therefore, based on the low-level control method and every patient’s remaining abilities, each robotic device provides a pre-defined training mode. Both passive training (robot-driven, position control technique), where the robot imposes the trajectories, and active training (patient-driven), where the robot modifies its trajectory in response to the subject’s will to move, are often implemented by rehabilitation devices.

Riccardo Iandolo et al. indicate, however, that the assistive training modality is the most pertinent of all the training methods. In the active assistive training mode, the assistive controllers, which mimic the traditional physical and occupational therapy approach, help participants move their impaired limbs into the proper positions when grabbing, reaching, or walking [2].

In particular, the assistance-as-needed approach is one of the most frequently used assistive techniques, since it lowers the chance that the patient will rely solely on the robot to complete the rehabilitation task.

On the other hand, Riccardo Iandolo et al. point out that over-assistance may in fact reduce engagement and, hence, the potential for inducing neuroplastic alterations [2]. The “slacking” effect, for example, which is defined as a decline in voluntary movement control, develops when a patient undergoes repeated passive limb mobilization. To prevent the “slacking” effect, challenge-based controllers are used alongside the assistance-as-needed strategy to make tasks more challenging or stimulating [2].
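The assistance-as-needed idea can be illustrated with a toy controller. This is a sketch under assumptions, not a scheme taken from [2]: the robot pushes only when the tracking error leaves a small deadband, and its gain decays from trial to trial unless errors keep it up, which discourages reliance on the robot. All parameter values and function names are hypothetical.

```python
def assistance_force(error, gain, deadband=0.01):
    """Robot force (N) pulling toward the target; zero inside the deadband (m)."""
    if abs(error) <= deadband:
        return 0.0
    excess = abs(error) - deadband
    return gain * excess * (1 if error > 0 else -1)

def update_gain(gain, error, forget=0.9, learn=200.0, max_gain=300.0):
    """Decay the gain every trial and raise it again when errors persist."""
    new_gain = forget * gain + learn * abs(error)
    return min(max(new_gain, 0.0), max_gain)

gain = 100.0  # N/m, hypothetical starting stiffness
trial_errors = [0.08, 0.06, 0.04, 0.02, 0.01, 0.005]  # m, patient improving
for error in trial_errors:
    force = assistance_force(error, gain)
    print(f"error={error:.3f} m  gain={gain:6.1f} N/m  force={force:5.2f} N")
    gain = update_gain(gain, error)
```

As the simulated errors shrink, the gain drifts downward and the robot contributes less force, so the patient has to do more of the work.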

Although numerous studies concentrate on developing new technologies or devices, making the most effective use of those currently available should be a major research focus. This might be accomplished by developing effective training techniques and implementing realistic control and assessment methods [2].

In order to optimize the potential of neurorehabilitation, robotic treatment must be combined with other disciplines, including computational neuroscience, motor learning and control, and bio-signal processing, among others [2].

ROBOTIC DEVICES USED IN UPPER LIMB MOTOR NEUROREHABILITATION

A therapy robot can be described as a multipurpose manipulator that can be reprogrammed and used for various rehabilitation tasks. In principle, a robotic system could move the arm and hand for this purpose, much as locomotion robots do in gait therapy. However, unlike the lower extremity, where a characteristic gait pattern can be identified, the upper extremity has no established typical motion pattern. The intricacy of the arms and hands and the wide range of available motion patterns therefore require a distinct approach [5].

Based on the different forms of physical human-robot interaction, there are two primary categories of robotic devices for neurorehabilitation:

- End-effector devices;

- Exoskeletons [2];

End-effector-based systems are robotic devices equipped with an exclusive interface that mechanically restrains the distal portion of the human limb, which is directly controlled; the rest of the kinematic chain is free, and the human limb has to adjust to external disturbances or movements performed by the end-effector robot [2].

Exoskeletons faithfully mimic the kinematics of the human limb and support its motion by manipulating the position and orientation of each joint. Additionally, the number of actuated joints and the range of motion (ROM) are properly selected to maximize control, resulting in close monitoring of the patient’s motion but at the cost of a higher complexity for the control of degrees of freedom (DOFs) [2].
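The difference between the two device types can be seen in a simplified model. The sketch below assumes a planar two-link arm (shoulder and elbow only) with hypothetical segment lengths: fixing the hand position, as an end-effector robot does, still leaves more than one joint configuration, whereas an exoskeleton sets each joint angle directly.

```python
import math

UPPER_ARM, FOREARM = 0.30, 0.25  # segment lengths in metres (assumed)

def hand_position(shoulder, elbow):
    """Forward kinematics of a planar two-link arm (angles in radians)."""
    x = UPPER_ARM * math.cos(shoulder) + FOREARM * math.cos(shoulder + elbow)
    y = UPPER_ARM * math.sin(shoulder) + FOREARM * math.sin(shoulder + elbow)
    return x, y

def joint_solutions(x, y):
    """Both joint configurations that place the hand at (x, y)."""
    cos_elbow = (x * x + y * y - UPPER_ARM**2 - FOREARM**2) / (2 * UPPER_ARM * FOREARM)
    if abs(cos_elbow) > 1:
        raise ValueError("target out of reach")
    solutions = []
    for elbow in (math.acos(cos_elbow), -math.acos(cos_elbow)):
        shoulder = math.atan2(y, x) - math.atan2(
            FOREARM * math.sin(elbow), UPPER_ARM + FOREARM * math.cos(elbow)
        )
        solutions.append((shoulder, elbow))
    return solutions

target = (0.35, 0.20)  # hand position imposed by an end-effector robot
for shoulder, elbow in joint_solutions(*target):
    x, y = hand_position(shoulder, elbow)
    print(f"shoulder={math.degrees(shoulder):7.2f} deg  "
          f"elbow={math.degrees(elbow):7.2f} deg  hand=({x:.3f}, {y:.3f})")
```

A real arm has many more degrees of freedom than this model, so the set of postures compatible with one hand position is larger still.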

In the past years, numerous robotic systems and protocols have been created based on task-oriented repetitive motions for the improvement of:

- ROM;

- Muscular strength;

- Movement coordination;

- Motor learning [2].

Based on the nature and degree of motor dysfunction and associated disability, one type of device may be more effective than the other; for example, exoskeletons may be more suitable to deliver forces to each joint if the patient has very low remaining sensorimotor functionality [2].

The following table exemplifies some neurorehabilitation devices (Table 1) [2,6].

| End-effector robotic devices | |
| --- | --- |
| MIT Manus | designed for the shoulder and elbow joints |
| ARM (Assisted Rehabilitation and Measurement) Guide | a counterbalanced robot that assists the reaching motion mechanically without loading the arm |
| GENTLE/s (Robotic assistance in neuro and motor rehabilitation) | |
| Italia NeReBot (Neurorehabilitation Robot) | mainly utilized to quantify aberrant joint torque coupling in chronic stroke |
| ACT (Arm Coordination Training Robot) | mainly utilized to quantify aberrant joint torque coupling in chronic stroke |
| Mirror Image Motion Enabler | implement bimanual training protocols |
| Bi-Manu-Track | implement bimanual training protocols |
| Exoskeleton devices | |
| SUEFUL, ARMin III, CADEN (Cable-Actuated Dextrous Exoskeleton for Neurorehabilitation), RUPERT (Robotic Upper Extremity Repetitive Trainer) | Provide shoulder and elbow joint motion |
| Manovo Power, ARMEO Power, IntelliArm exoskeleton | Can implement movements like hand opening and closing, grasp movements, fingers passive stretching |

Table 1. Examples of exoskeletons and end-effector devices (available from [2,6])

Compared with end-effector devices, upper limb exoskeletons for rehabilitation have been developed only recently, owing to the following:

- the intricate interaction between the mechanical structure of exoskeletons and the various joints in the human body,

- the intricate control schemes that must be adopted to deal with transparency and back-drivability,

- the requirement to promote patient sensorimotor recovery without passively moving the patient’s joints by using assistive training modalities capable of responding to any pathological movement [2].

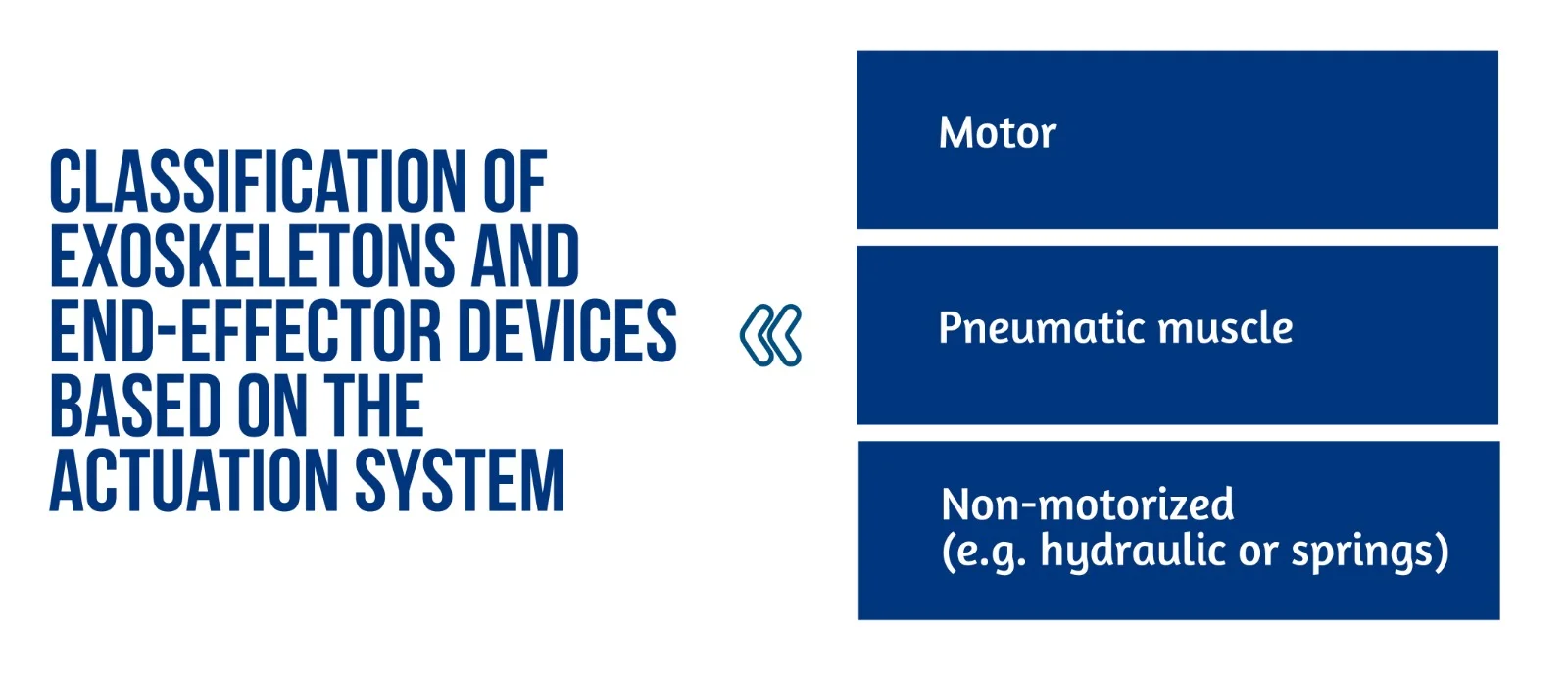

Figure 1. Classification of exoskeletons and end-effector devices based on the actuation system

Several innovative control schemes for exoskeletons have recently been designed to improve inter-joint coordination [2].

End-effector and exoskeleton devices (Figure 1) implement closed-loop feedback control with feedforward components. This approach allows the controller to correct patients’ performance errors and to compensate for the weight, inertia, and friction of the device mechanics. The feedforward components may be generated from a model of the robot or learned iteratively. This control method is typically used in exoskeletons, where position data is used to close the loop. The interaction control framework is also used to implement assistive methods. Most end-effector devices, in particular, use impedance control techniques, whereas exoskeletons use admittance control schemes [2].
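The two interaction-control styles differ in what is measured and what is commanded. The one-dimensional sketch below is an illustration with hypothetical stiffness, damping, and virtual-mass values, not the controller of any device named here: impedance control measures motion and commands a force, while admittance control measures force and commands a motion.

```python
def impedance_force(x, v, x_ref, v_ref, stiffness=200.0, damping=20.0):
    """Impedance control: measure motion, command a force (N)."""
    return stiffness * (x_ref - x) + damping * (v_ref - v)

def admittance_step(x, v, force, x_ref, dt, mass=2.0, stiffness=200.0, damping=20.0):
    """Admittance control: measure force, command a motion (one Euler step)."""
    accel = (force - damping * v - stiffness * (x - x_ref)) / mass
    v_next = v + accel * dt
    x_next = x + v_next * dt
    return x_next, v_next

# Impedance: the hand is 2 cm short of the reference and at rest.
pull = impedance_force(x=0.08, v=0.0, x_ref=0.10, v_ref=0.0)
print(f"impedance force: {pull:.2f} N")

# Admittance: a constant 4 N push from the patient shifts the commanded position.
x, v = 0.10, 0.0
for _ in range(5000):  # 5 s of simulated time at 1 kHz
    x, v = admittance_step(x, v, force=4.0, x_ref=0.10, dt=0.001)
print(f"admittance position after 5 s: {x:.4f} m")
```

With these values the virtual spring settles where its restoring force balances the push, that is, at the reference plus force divided by stiffness.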

The exoskeleton can function in three modes: passive (robot-driven), active (patient-driven), and challenge, in which the robot resists the force applied by the patient to make the task more difficult [6]. In recent years, sliding mode controllers and controllers triggered by detection of the patient’s intention, estimated from electrophysiological measures such as surface electromyography (sEMG) and electroencephalography (EEG), have been developed for upper limb exoskeletons.
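Intention detection from sEMG is often approximated by thresholding a smoothed, rectified signal. The sketch below assumes that approach together with a hypothetical rule for choosing among the three modes; neither the threshold rule nor the `patient_can_complete_task` flag comes from [2] or [6], and the signal is synthetic.

```python
import numpy as np

def emg_envelope(emg, window=50):
    """Rectify the sEMG signal and smooth it with a moving average."""
    kernel = np.ones(window) / window
    return np.convolve(np.abs(emg), kernel, mode="same")

def detect_intention(emg, rest_samples=200, n_std=3.0):
    """True once the envelope exceeds the resting mean by n_std standard deviations."""
    envelope = emg_envelope(emg)
    rest = envelope[:rest_samples]
    threshold = rest.mean() + n_std * rest.std()
    return bool((envelope[rest_samples:] > threshold).any())

def select_mode(intention_detected, patient_can_complete_task):
    """Pick one of the three modes named in the text (hypothetical rule)."""
    if not intention_detected:
        return "passive"      # robot-driven
    if patient_can_complete_task:
        return "challenge"    # robot resists the applied force
    return "active"           # patient-driven

# Synthetic recording: quiet baseline followed by a burst of muscle activity.
rng = np.random.default_rng(1)
quiet = 0.02 * rng.standard_normal(400)
burst = 0.5 * rng.standard_normal(200)
emg = np.concatenate([quiet, burst])

print(select_mode(detect_intention(emg), patient_can_complete_task=False))
```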

All of the approaches described above are based on comparing an error signal to a reference trajectory which may be easily estimated or created with end-effector devices; however, exoskeletons present several challenges. As previously stated, exoskeletons can restore patient inter-joint coordination by appropriately adjusting the various robot joint trajectories. Nevertheless, the relationship between recovery and exoskeleton trajectories remains unknown [2].

Recently proposed approaches include:

- Reproducing previously recorded trajectories made by healthy participants;

- Using previously recorded pathological involuntary joint torques translated in the joint kinematics domain [2].
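The error signal mentioned above is simply the difference between a reference trajectory and the measured movement. As a sketch, the code below uses a minimum-jerk profile as a stand-in for a recorded healthy reach; that choice, the 20 cm reach, and the simulated undershoot are assumptions for illustration only.

```python
import numpy as np

def minimum_jerk(start, goal, duration, t):
    """Position along a minimum-jerk reach from start to goal at times t."""
    s = np.clip(t / duration, 0.0, 1.0)
    return start + (goal - start) * (10 * s**3 - 15 * s**4 + 6 * s**5)

t = np.linspace(0.0, 1.0, 101)
reference = minimum_jerk(0.0, 0.20, duration=1.0, t=t)

# Hypothetical measured movement that undershoots the reference by 10 %.
measured = 0.9 * reference
error = reference - measured

max_error = float(np.abs(error).max())
rmse = float(np.sqrt(np.mean(error**2)))
print(f"max error = {max_error:.3f} m, RMSE = {rmse:.3f} m")
```

A controller would feed this error back at every time step; for an exoskeleton the same comparison has to be made per joint, which is where the choice of reference becomes difficult.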

A brief introduction to WristBot

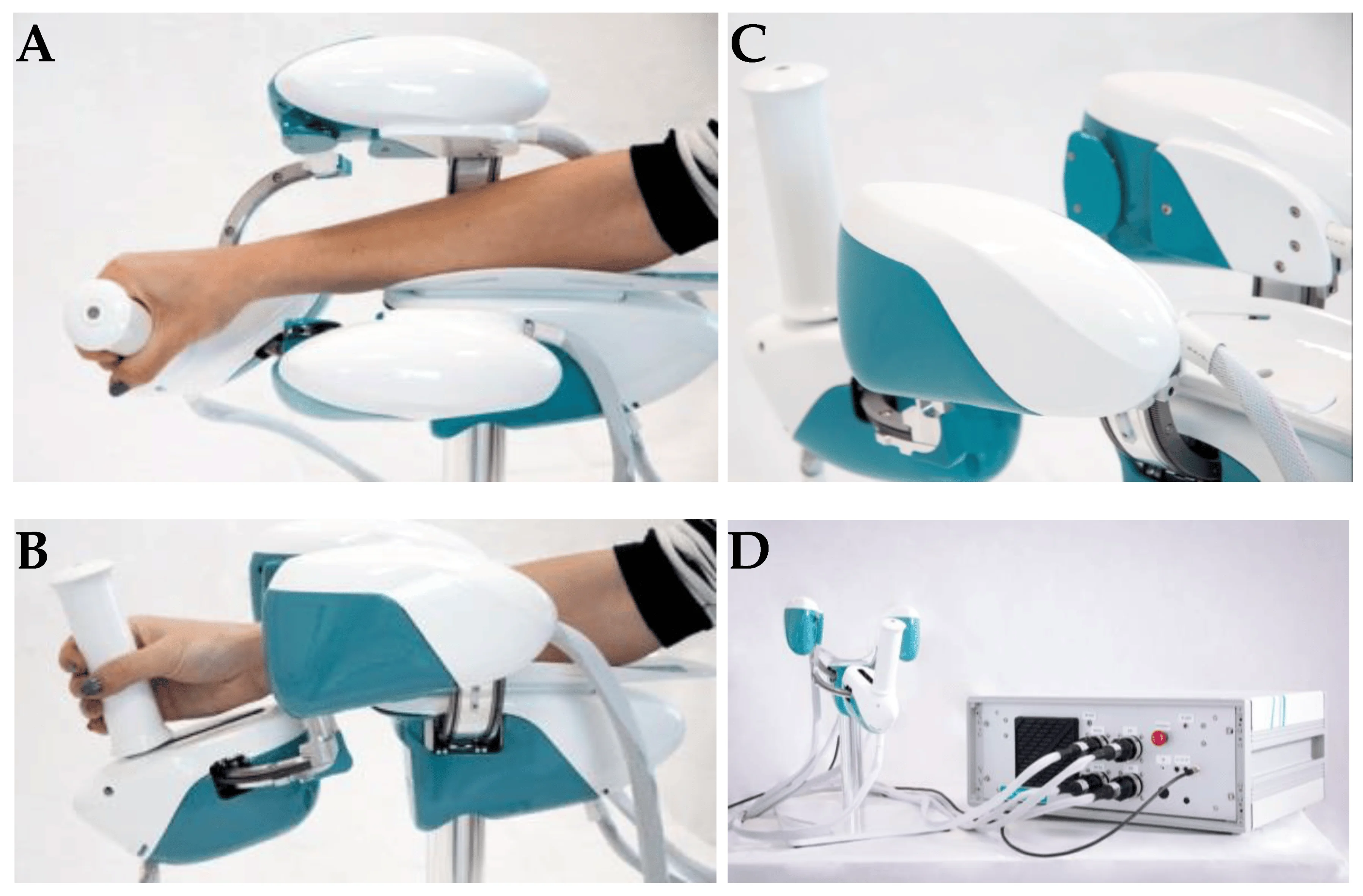

WristBot (see Figure 2) is a robotic end-effector device intended for the neurorehabilitation of individuals with neurological or orthopedic conditions. It allows wrist movements in flexion/extension, radial/ulnar deviation, and pronation/supination. Its four brushless motors guide and assist wrist motions in these three degrees of freedom. The motors are chosen to give precise haptic representation while compensating for the device’s weight and inertia, allowing free and smooth motions [2].

Figure 2. Wrist robot (A) and movements in the radial-ulnar deviation DOF (degree of freedom) (B); posterior-lateral view of WristBot’s handle (C); and frontal view of the device connected to the case, with the integrated PC and electronic control unit (D) (available from [2])

The key benefit of the WristBot is its highly customized therapy, provided by its versatility and programmability. Additionally, the device’s quantitative functional evaluation is a helpful tool to aid physicians in selecting the best course of treatment [2].

Latest advances in neurorehabilitation involving robotic devices

Robotic training greatly benefits the neurorehabilitation process. The devices may be programmed to execute several training options depending on motor learning paradigms and/or brain control. Robots can also measure movement performance accurately and precisely and deliver highly repeatable movements (as dictated by forces and torques). Riccardo Iandolo et al. highlight that a suitable rehabilitation training regimen must be carefully designed to make the best use of rehabilitation robots for patient care. To this end, a tremendous amount of research has been undertaken in recent years to understand the many mechanisms by which people acquire (or relearn) a motor skill [2].

Planning an effective robot-based treatment to promote sensorimotor rehabilitation requires a thorough understanding of how the brain governs movement and which processes are used to learn new abilities [2].

A key factor in facilitating motor learning is motor variability. Variability was once regarded as “noise” that the brain needed to eliminate when learning, but recent research has begun to recognize its significance in developing motor abilities [2].

The benefits of visual feedback from watching someone else perform an action are well established for motor learning. According to recent research, participants learn better when they watch a video of someone executing reaching actions in a complex setting before performing the activity on their own. Motor and sensory learning are tightly connected: motor learning influences sensory networks, and sensory learning alters motor regions [2].

BCIs (brain–computer interfaces) were first designed as non-invasive communication aids, whereas their invasive counterparts, commonly referred to as brain-machine interfaces (BMIs), seek to restore some degree of motor function in patients who are entirely paralyzed or severely disabled. Recent studies demonstrate how BCIs and BMIs may be effectively used to improve the results of a neurorehabilitation intervention: these systems provide assistance that mirrors the user’s motor intention, leading to greater cortical modifications.

The findings open the door to innovative, tailored therapies where closed-loop decoding of brain activity is crucial for enhancing sensorimotor repair [2].

Multidisciplinary evaluation of recovery – assessment of sensorimotor performance

The current clinical approach for assessing movement disorders mainly comprises qualitative assessments made by human operators using clinical scales, as shown in Table 2 [2].

| CLINICAL RATING SCALES | |
| --- | --- |
| Quality of Upper Extremity Skills Test (QUEST) | Assesses movement patterns and hand function |
| Modified Ashworth Scale (MAS) | Assesses the spasticity of the upper limb |
| Fugl–Meyer Assessment (FMA) | Evaluates sensorimotor impairments |
| Melbourne Assessment of Unilateral Upper Limb Function (MAUULF) | Evaluates the quality of the movements |
| Box and Block Test (BBT) | Evaluates gross manual dexterity |

Table 2. Clinical rating scales

The following tests evaluate position sense acuity, detection of passive motion, and kinesthesia: Nottingham Sensory Assessment, Rivermead Assessment of Somatosensory Performance, and Joint Position Matching test [2].

Sensorimotor performance can also be measured via recordings of brain and/or muscle activity, such as high-density electroencephalography (hdEEG), source imaging methods that have lately enabled the accurate reconstruction of resting-state networks in the brain, and the assessment of electrophysiological sub-cortical activity [2].

Functional connectivity (FC) represents the relationship between separate brain areas and changes after proprioceptive training with a robotic device. Moreover, FC predicts the behavioral outcomes of rehabilitation protocols and motor function recovery in stroke patients and corresponds with the extent of clinical impairment in patients with early relapsing-remitting multiple sclerosis [2].
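One common way to quantify FC is the Pearson correlation between the activity time series of two regions. The sketch below assumes that estimator and uses synthetic signals; it does not reproduce the hdEEG pipeline described in [2].

```python
import numpy as np

def functional_connectivity(timeseries):
    """Pearson correlation matrix; each row of `timeseries` is one brain region."""
    return np.corrcoef(timeseries)

rng = np.random.default_rng(42)
n_samples = 1000
shared = rng.standard_normal(n_samples)            # common driving signal
region_a = shared + 0.3 * rng.standard_normal(n_samples)
region_b = shared + 0.3 * rng.standard_normal(n_samples)
region_c = rng.standard_normal(n_samples)          # unrelated region

fc = functional_connectivity(np.vstack([region_a, region_b, region_c]))
print(np.round(fc, 2))
```

Regions A and B share a driving signal and come out strongly correlated, while region C stays near zero; comparing such matrices before and after training is one way to track the changes the paragraph describes.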

The promises of robotic devices in neurorehabilitation

Because motor impairment is the common denominator of all functional motor disorders, focusing rehabilitation efforts on reducing impairment rather than on compensation could be more beneficial in the acute and subacute stages of recovery. High intensity (i.e., dose per unit time), high dosage, and realistic movement training in these three dimensions are important factors influencing the impact of rehabilitation [4].

Robotic neurorehabilitation equipment may increase the quality of quantitative evaluation, which is also necessary to better understand and infer the effect of rehabilitation therapy on sensorimotor function. Robotic measures have the potential to exceed human-administered clinical scales and are only limited by the robot sensors’ performance [2]. Robots provide a more exact evaluation of both initial impairment and impairment changes in response to therapy regarding movement kinematics and dynamics [4].

Conclusion

Future research should concentrate on creating novel mechatronic structures and improved control algorithms. Exoskeletons must cope with a high level of mechanical complexity: they must be portable, lightweight, efficient, and compliant while also properly supporting the patient, even in the presence of severe impairments. To control costs throughout the design of a device, it is essential to keep in mind the specific disability the device is being developed for, so that only the most crucial development goals are chosen [2].

Through the advancement of robotic scales and the incorporation of measurements with biosignals, sensorimotor performance and recovery may be comprehensively evaluated. Researchers propose that new methods based on multimodal evaluation methodologies should be used to leverage unique and/or composite indicators. This may lead to a better understanding of how to provide the best tools to improve motor recovery and neuroplasticity [2].

The study of Riccardo Iandolo et al. [2] highlights the importance of patient evaluation in increasing the effectiveness of a rehabilitation intervention. When building a tool to help with a certain disorder, it is critical to consider the end user’s perspective, as the user’s collaborative effort would almost certainly result in a proper treatment [2].

Robots provide movement controllability and measurement reliability, making them excellent tools for neurologists and therapists in addressing neurorehabilitation issues [4]. According to studies, robot-assisted treatment is less expensive than standard intense arm training with comparable effects. Therefore, robots are a significant aspect of the current therapeutic paradigm for improving the overall quality of neurologic rehabilitation [5].

References

- Kuo CY, Liu CW, Lai CH, Kang JH, et al. Prediction of robotic neurorehabilitation functional ambulatory outcome in patients with neurological disorders. Journal of NeuroEngineering and Rehabilitation. 2021. doi:10.1186/s12984-021-00965-6

- Iandolo R, Marini F, Semprini M, Laffranchi M, et al. Perspectives and Challenges in Robotic Neurorehabilitation. Applied Sciences 2019. doi:10.3390/app9153183

- Chien WT, Chong Y, Tse MK, Chien CW, Cheng HC. Robot-assisted therapy for upper-limb rehabilitation in subacute stroke patients: A systematic review and meta-analysis. Brain and Behavior 10, 2020. doi:10.1002/brb3.1742

- Huang VS, Krakauer JW. Robotic neurorehabilitation: a computational motor learning perspective. Journal of NeuroEngineering and Rehabilitation 6, 2009. doi:10.1186/1743-0003-6-5

- Jakob I, Kollreider A, Germanotta M, Benetti F, et al. Robotic and Sensor Technology for Upper Limb Rehabilitation. Innovations Influencing Physical Medicine and Rehabilitation, 2018. doi:10.1016/j.pmrj.2018.07.011

- Rehmat N, Zuo J, Meng W, Liu Q, et al. Upper limb rehabilitation using robotic exoskeleton systems: A systematic review. International Journal of Intelligent Robotics and Applications 2, 2018, 283–295. doi:10.1007/s41315-018-0064-8

- Dehem S, Gilliaux M, Stoquart G, Detrembleur C, et al. Effectiveness of upper-limb robotic-assisted therapy in the early rehabilitation phase after stroke: A single-blind, randomised, controlled trial. Annals of Physical and Rehabilitation Medicine 62, 2019, 313–320. doi:10.1016/j.rehab.2019.04.002

[ARTICLE] Brain–computer interface treatment for gait rehabilitation in stroke patients – Full Text

Posted by Kostas Pantremenos in Gait Rehabilitation - Foot Drop on October 29, 2023

The use of Brain–Computer Interfaces (BCI) as rehabilitation tools for chronically ill neurological patients has become more widespread. BCIs combined with other techniques allow the user to restore neurological function by inducing neuroplasticity through real-time detection of motor-imagery (MI) as patients perform therapy tasks. Twenty-five stroke patients with gait disability were recruited for this study. Participants performed 25 sessions with the MI-BCI and assessment visits to track functional changes during the therapy. The results of this study demonstrated a clinically significant increase in walking speed of 0.19 m/s, 95%CI [0.13–0.25], p < 0.001. Patients also reduced spasticity and improved their range of motion and muscle contraction. The BCI treatment was effective in promoting long-lasting functional improvements in the gait speed of chronic stroke survivors. Patients have more movement in the lower limb; therefore, they can walk better and more safely. This functional improvement can be explained by improved neuroplasticity in the central nervous system.

1. Introduction

Stroke is one of the main causes of mortality and long-term disability worldwide. Functional deficit of the lower limb is the most common paresis after a stroke. Stroke patients rarely fully recover after months or even years of therapy and other treatment, leaving them with permanent impairment. Many of these patients never regain the ability to walk well enough to perform all their daily activities (Hesse et al., 2008; Mehrholz et al., 2017). Gait recovery is one of the major therapy goals in rehabilitation programs for stroke patients and many methods for gait analysis and rehabilitation have been developed (Mehrholz et al., 2017). Weakened muscle tone is another common challenge in motor rehabilitation. Therapies such as active foot drop exercises, electromechanically assisted therapy and treadmill therapy are usually limited to patients with mild or moderate impairment (Mills et al., 2011; Mehrholz et al., 2017).

A 2018 study (Mehrholz et al., 2018) conducted a network meta-analysis based on 95 publications out of 44,567 that were considered. In this study, 4,458 patients were included, and the effectiveness of the most common interventions for gait rehabilitation after stroke was analyzed. The interventions were classified in five groups: (1) No walking training, (2) Conventional walking training (walking on the floor, preparatory exercises in a sitting position, balance training etc. without technical aids and without treadmill training or electromechanical-assisted training), (3) Treadmill training without or with body-weight support, (4) Treadmill training with or without a walking speed paradigm, (5) Electromechanical-assisted training with end-effector devices or exoskeletons. For the primary endpoint of walking speed, end-effector-assisted training (EGAIT_EE) achieved significantly greater improvements than conventional walking rehabilitation (mean difference [MD] = 0.16 m/s, 95% CI = [0.04, 0.28]). None of the other interventions improved walking speed significantly.
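As a rough way to read the reported interval, the sketch below backs out an approximate standard error and z statistic from the mean difference and its 95% CI. It assumes a symmetric, normal-approximation interval (critical value 1.96), which is an assumption made here for illustration and not a description of how the meta-analysis computed its estimates; the function name is hypothetical.

```python
import math

def summarize_ci(mean_diff, ci_low, ci_high, z_crit=1.96):
    """Approximate SE, z statistic and two-sided p-value from a symmetric 95% CI.

    Assumes the interval was built as mean_diff +/- z_crit * SE.
    """
    se = (ci_high - ci_low) / (2 * z_crit)
    z = mean_diff / se
    p_two_sided = math.erfc(abs(z) / math.sqrt(2))
    excludes_zero = ci_low > 0 or ci_high < 0
    return se, z, p_two_sided, excludes_zero

# Values quoted in the text: MD = 0.16 m/s, 95% CI [0.04, 0.28]
se, z, p, excludes_zero = summarize_ci(0.16, 0.04, 0.28)
print(f"SE ~ {se:.3f} m/s, z ~ {z:.2f}, p ~ {p:.3f}, CI excludes 0: {excludes_zero}")
```

Because the interval does not contain zero, the difference counts as statistically significant at the 5% level, which matches the wording in the text.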

Functional electrical stimulation (FES) has also been used in motor rehabilitation therapy over the last few decades. Passive FES therapy can reduce muscle spasms and shorten the term of motor recovery (Hong et al., 2018). Passive therapies such as continuous passive motion or cycling therapy have been employed for patients and showed functional improvements in previous studies (Janssen et al., 2008; Yeh et al., 2010; Ambrosini et al., 2011). However, they do not include devices or systems to monitor the patient’s active engagement in the therapy.

Today, Brain-Computer Interfaces (BCIs) can provide an objective tool for measuring Motor Imagery (MI), creating new possibilities for “closed-loop” feedback (Wolpaw and Wolpaw, 2012). Closed-loop feedback depends on sensing the desired mental activity and is possible with MI-based BCIs, which could significantly improve rehabilitation therapy outcomes (Ortner et al., 2012; Cho et al., 2016; Cantillo-Negrete et al., 2018; Irimia et al., 2018).

MI-based BCIs have been employed in rehabilitation training for stroke patients to fill the gap between patients’ expectations and therapy outcomes. In conventional rehabilitation therapies, patients are often asked to try to move the paretic limb, or to imagine moving it, while FES, a physiotherapist and/or a robotic device helps them to perform the desired movement. Their feedback is often provided when the users are not performing the required mental activity. There is no objective way to determine whether patients who cannot move are actively performing the desired motor imagery (MI) task and thus producing concordant neural activation. The efficacy of MI-based BCIs has been shown in multiple studies implementing exoskeletons, orthoses or robots which induce passive movement of the patients’ affected limbs (Ramos-Murguialday et al., 2013; Ono et al., 2014; Ang et al., 2015). During repetitive neurofeedback training sessions, even patients with severe impairment could complete the sensorimotor loop in their brains linking coherent sensory feedback with motor intention (Cho et al., 2016; Pichiorri et al., 2017; Irimia et al., 2018).

This concurrent sensory feedback with motor intention is an important factor for motor recovery (Ortner et al., 2012; Bolognini et al., 2016; Pichiorri et al., 2017; Cantillo-Negrete et al., 2018; Irimia et al., 2018). Concurrent feedback based on users’ intention may help them learn mental strategies associated with movement and BCI use, which can affect results (Neuper et al., 2005; Neuper and Allison, 2014). Neural networks are strengthened when the presynaptic and postsynaptic neurons are both active. In conventional therapies, when patients receive feedback while they are not performing MI, these two neuronal networks are not simultaneously firing. This dissociation between motor commands and sensory feedback may explain why the therapy does not significantly induce the reorganization of the patients’ brains around their lesioned area. Non-simultaneous, dissociated feedback cannot support the Hebbian learning between two neuronal populations that underlies the desired improvements from rehabilitation (Mayford et al., 2012; Wolpaw and Wolpaw, 2012). Thus, conventional therapies may sometimes fail because they rely on open-loop feedback.
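The Hebbian argument above can be illustrated with a toy model. The sketch below is a deliberately simplified assumption, not the model used in the study: `pre` stands for activity of the motor-intention population, `post` for activity of the sensory-feedback population, and the learning rate and trial sequences are hypothetical.

```python
def hebbian_update(weight, pre, post, learning_rate=0.1):
    """Basic Hebbian rule: the weight grows only when pre and post are co-active."""
    return weight + learning_rate * pre * post

def train(trials, weight=0.0):
    """Apply the Hebbian update over a sequence of (pre, post) trials."""
    for pre, post in trials:
        weight = hebbian_update(weight, pre, post)
    return weight

# 1 = population active during the trial, 0 = silent (hypothetical trials)
closed_loop = [(1, 1)] * 10          # feedback arrives while motor imagery is active
open_loop = [(1, 0), (0, 1)] * 5     # feedback and motor imagery never coincide

w_closed = train(closed_loop)
w_open = train(open_loop)
print(f"closed-loop weight: {w_closed:.2f}, open-loop weight: {w_open:.2f}")
```

In this toy setting the connection strengthens only under closed-loop feedback, because only there are both populations active in the same trial. With dissociated activity the weight stays at its starting value, even though each population is active just as often.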

This clinical trial investigated the impact of combining BCI technology with MI and FES feedback for motor recovery of the lower limbs. The patients’ real-time sensory feedback depended on their movement intention. We explore the relationship between the proposed rehabilitation method and rehabilitation results, including changes in walking speed. Patients who use the training mode may have better motor outcomes, and these outcomes will be compared with those from patients who had EGAIT_EE therapy. […]

[ARTICLE] Can specific virtual reality combined with conventional rehabilitation improve poststroke hand motor function? A randomized clinical trial – Full Text

Posted by Kostas Pantremenos in Paretic Hand, REHABILITATION, Virtual reality rehabilitation on April 8, 2023

Abstract

Trial objective

To verify whether conventional rehabilitation combined with specific virtual reality is more effective than conventional therapy alone in restoring hand motor function and muscle tone after stroke.

Trial design

This prospective single-blind randomized controlled trial compared conventional rehabilitation based on physiotherapy and occupational therapy (control group) with the combination of conventional rehabilitation and specific virtual reality technology (experimental group). Participants were allocated to these groups in a ratio of 1:1. The conventional rehabilitation therapists were blinded to the study, but neither the participants nor the therapist who applied the virtual reality–based therapy could be blinded to the intervention.

Participants

Forty-six patients (43 of whom completed the intervention period and follow-up evaluation) were recruited from the Neurology and Rehabilitation units of the Hospital General Universitario of Talavera de la Reina, Spain.

Intervention

Each participant completed 15 treatment sessions lasting 150 min/session; the sessions took place five consecutive days/week over the course of three weeks. The experimental group received conventional upper-limb strength and motor training (100 min/session) combined with specific virtual reality technology devices (50 min/session); the control group received only conventional training (150 min/session).
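The dosing described above can be checked with a short calculation. The sketch below only restates the session counts and durations given in the abstract; the variable and function names are hypothetical.

```python
SESSIONS = 15  # five days/week over three weeks, as stated in the abstract

def total_minutes(minutes_per_session, sessions=SESSIONS):
    """Total therapy time in minutes over the whole programme."""
    return minutes_per_session * sessions

experimental = {
    "conventional": total_minutes(100),
    "virtual_reality": total_minutes(50),
}
control = {"conventional": total_minutes(150)}

experimental_total = sum(experimental.values())
control_total = sum(control.values())
print(experimental_total, control_total)  # prints: 2250 2250
```

Both groups therefore received the same total therapy time (2250 minutes, or 37.5 hours), so any between-group difference cannot be attributed to a larger overall dose.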

Results

As measured by the Ashworth Scale, a decrease in wrist muscle tone was observed in both groups (control and experimental), with a notably larger decrease in the experimental group (baseline mean/postintervention mean: 1.22/0.39; difference between baseline and follow-up: 0.78; 95% confidence interval: 0.38–1.18; effect size = 0.206). Fugl-Meyer Assessment scores were observed to increase in both groups, with a notably larger increase in the experimental group (total motor function: effect size = 0.300; mean: − 35.5; 95% confidence interval: − 38.9 to − 32.0; wrist: effect size = 0.290; mean: − 5.6; 95% confidence interval: − 6.4 to − 4.8; hand: effect size = 0.299; mean: − 8.9; 95% confidence interval: − 10.1 to − 7.6). On the Action Research Arm Test, the experimental group quadrupled its score after the combined intervention (effect size = 0.321; mean: − 32.8; 95% confidence interval: − 40.1 to − 25.5).

Conclusion

The outcomes of the study suggest that conventional rehabilitation combined with a specific virtual reality technology system can be more effective than conventional programs alone in improving hand motor function and voluntary movement and in normalizing muscle tone in subacute stroke patients. With combined treatment, hand and wrist functionality and motion increase; resistance to movement (spasticity) decreases and remains at a reduced level.[…]

[WEB] Rehabilitation specialist partners with innovative VR technology company to explore new era of assistive technology on stroke patients

Posted by Kostas Pantremenos in Assistive Technology, Neuroplasticity, REHABILITATION, Virtual reality rehabilitation on March 6, 2023

A specialist rehabilitation provider based in Cambridgeshire has recently partnered with an innovative Virtual Reality (VR) technology company to trial its latest products on those recovering from strokes – which are designed to create immersive and engaging neurorehabilitation.

Neuromersiv, which is a brand that looks to enable therapists and medical professionals to make neurorehabilitation therapy more diverse and engaging through utilising the increasing capabilities of VR, has partnered with Askham Village Community.

With a focus on individuals recovering from stroke, Askham Rehab will be looking to trial the new technology over a six-month period to explore its ability in facilitating functional movements and training for its patients, providing a unique opportunity that will also assist with research and inform the further development of the software.

Neuromersiv’s upper-limb therapy system ‘Ulysses’ is currently the only neuro rehab VR system that uses functional tasks performed in virtual daily living environments to enable repetitions for neuroplasticity. Askham will focus primarily on the simulation of functional tasks, including personal, kitchen and bathroom use, with the ultimate goal of re-establishing independent living.

Neuromersiv, co-founded by Anshul Dayal and Oliver Morton-Evans, is an Australian-based company that has brought together a group of leading subject matter experts as advisors to provide their wealth of knowledge, skills and support for the next generation in neurorehabilitation.

Ulysses has recently been registered as a class 1 software medical device with the UK’s Medicines and Healthcare products Regulatory Agency. It has also undergone a phase 1 clinical trial in Australia, where it helped in delivering promising clinical outcomes.

The technology could unlock a depth of opportunity to help transition stroke survivors back to living independently.

Sara Neaves, Clinical Lead and Outpatients Service Manager at Askham Rehab, says: “We are delighted to be partnering with Neuromersiv for this six-month trial and to see how it impacts the recovery of our patients. For someone who has suffered a life-changing stroke, regaining independence is so important and to think that assistive technology in the form of VR can help accelerate this, is something we just had to be part of. At Askham, we always try to remain at the sharp end of innovation and when the opportunity to be the first trialists in the UK presented itself, we were very keen to get involved.”

Askham Village is a family-run community with a rehabilitation service that has transformed the lives of many individuals, and its remote setting is the perfect place to approach any personal challenge, big or small. With the latest robotic equipment and state-of-the-art gym and hydrotherapy equipment, it is leading the way in the local area for rehabilitation services and this recent venture highlights its forward thinking approach.

Anshul Dayal of Neuromersiv also commented on the partnership, saying: “We are really excited to be partnering with a progressive organisation like Askham to trial our VR technology. The partnership will not only provide us with the opportunity to gain insights on our product from clinicians working on the forefront of improving lives, but also provide us with a springboard for our broader commercialisation strategy in the United Kingdom and Europe.”

Neaves concludes: “In the world of rehabilitation, innovation is paramount and to see the capabilities of technology develop over the years is a testament to the creative minds in the industry. Neuromersiv is looking to use its expertise for the betterment of neurorehabilitation patients, something we also pride ourselves on at Askham, making this the perfect meeting of minds.”

[ARTICLE] Upper Limb Function Recovery by Combined Repetitive Transcranial Magnetic Stimulation and Occupational Therapy in Patients with Chronic Stroke According to Paralysis Severity – Full Text

Posted by Kostas Pantremenos in Paretic Hand, tDCS/rTMS on February 12, 2023

Abstract

Repetitive transcranial magnetic stimulation (rTMS) with intensive occupational therapy improves upper limb motor paralysis and activities of daily living after stroke; however, the degree of improvement according to paralysis severity remains unverified. Target activities of daily living using upper limb functions can be established by predicting the amount of change after treatment for each paralysis severity level to further aid practice planning. We estimated post-treatment score changes for each severity level of motor paralysis (no, poor, limited, notable, and full), stratified according to Action Research Arm Test (ARAT) scores before combined rTMS and intensive occupational therapy. Motor paralysis severity was the fixed factor for the analysis of covariance; the delta (post-pre) of the scores was the dependent variable. Ordinal logistic regression analysis was used to compare changes in ARAT subscores according to paralysis severity before treatment. We implemented a longitudinal, prospective, interventional, uncontrolled, and multicenter cohort design and analyzed a dataset of 907 patients with stroke hemiplegia. The largest treatment-related changes were observed in the Limited recovery group for upper limb motor paralysis and the Full recovery group for quality-of-life activities using the paralyzed upper limb. These results will help predict treatment effects and determine exercises and goal movements for occupational therapy after rTMS.

1. Introduction

Motor paralysis after stroke limits patients’ activities of daily living (ADL) and reduces their quality of life [1,2]. Recently, noninvasive brain stimulation therapy has been developed to improve patients’ motor paralysis and ADL, and its effectiveness has been demonstrated [3,4]. The treatment of upper limb motor paralysis involves modulation of interhemispheric inhibition and induction of neuroplasticity in the cerebrum. A novel intervention using repetitive transcranial magnetic stimulation (rTMS) in combination with intensive occupational therapy (NEURO) has recently been developed [5]. In patients with stroke hemiplegia, high-frequency rTMS has been applied to the hemisphere ipsilateral to the paralysis to increase excitability [6], and low-frequency rTMS has been applied to the contralateral hemisphere to decrease interhemispheric inhibitory connections [7,8] with the damaged cortex [9]; thus, both high-frequency rTMS and low-frequency rTMS have been applied [10]. Repetitive currents are induced in the brain cortex to produce long-term changes in cortical excitability. In acute patients, high-frequency (10 Hz) rTMS applied to the impaired motor cortex activates it, improving paralysis [11,12]. In occupational therapy after rTMS, the patients in whom the activation of the interhemispheric inhibitory motor cortex has been adjusted are prescribed repetitive joint movements. The aim is to promote use-dependent plasticity in the brain and to subsequently restore motor paralysis and improve ADL [13]. NEURO is an effective treatment for improving upper limb dysfunction and impairments in ADL in chronic stroke patients 6 months after stroke onset. Its therapeutic effect has been shown to be unaffected by stroke type (cerebral hemorrhage or cerebral infarction) [14].

The goal of NEURO is to improve the quality of movement of the patient’s paralyzed upper limb by allowing it to be used in ADL. Since the effectiveness of NEURO depends on the severity of motor paralysis, therapists determine the exercises and target movements based on the patient’s pre-treatment upper limb function assessment score. The Fugl–Meyer Assessment of the Upper Extremity (FMAUE) and the Action Research Arm Test (ARAT) are used to assess upper limb motor function outcomes in NEURO [15]. These evaluation methods have been shown to have high accuracy and clinical usefulness. A previous study has been conducted to estimate post-treatment scores from the pre-NEURO FMAUE score [16]. The ARAT is a functional upper limb assessment tool used in patients with post-stroke hemiplegia and is characterized by its ability to reflect the patient’s activity [17]. Since the ARAT consists of object manipulation and reaching tasks, the occupational therapist (OT) plans exercises by estimating the ADLs in which the patient can use their hands based on the obtained assessment results. As the ARAT score correlates with the Motor Activity Log, which investigates the use of the paralyzed limb in ADLs, OTs helping patients improve their activity limitations can use it as a reference value for exercises and goal-setting [18,19]. Therefore, it can be inferred that predicting treatment effects with ARAT is more advantageous than using FMAUE in setting treatment goals and planning effective ADL exercises for patients. If ARAT scores are found to improve with NEURO, it will be easier for OTs to pre-determine the content of ADL exercises and develop achievable ADL goals.

Patients with mild-to-moderate motor paralysis with FMAUE scores ≥43 have higher interhemispheric inhibition from the healthy hemisphere to the affected hemisphere. It is predicted that the therapeutic effect of upper limb practice in the presence of rTMS-induced changes in synaptic transmission efficiency is dependent on motor paralysis severity [20]. If the post-treatment effects according to motor paralysis severity can be predicted using pre-treatment ARAT scores, the target movements for patients could be set with high accuracy. Recently, a treatment method using a brain-computer interface (BCI) was developed for the rehabilitation of stroke patients, and its effectiveness has been reported [21,22]. Even for new intervention methods, it is better to formulate exercises adapted to the severity of paralysis and recovery. Therefore, the results obtained in this study can be used as data to plan the most appropriate practice for patients in terms of future new intervention methods. As a result, this study aimed to estimate the amount of change in ARAT scores for each level of motor paralysis severity, classified according to the ARAT score before NEURO. […]

[BLOG POST] Robot-assisted neurocognitive rehabilitation of the hand

Posted by Kostas Pantremenos in Cognitive Rehabilitation, Paretic Hand, Rehabilitation robotics on February 12, 2023

Authors: Irina Benedek, Oana Vanta

1 Innovations in neurorehabilitation of the upper limbs

2 A multimodal approach to robot-assisted neurocognitive rehabilitation of the hand

3 Study design

4 The roles of robotic devices in improving upper extremity function

5 Results, discussions, and future perspectives

6 Conclusion on robot-assisted neurocognitive rehabilitation of the hand

Innovations in neurorehabilitation of the upper limbs

Is robot-assisted neurocognitive rehabilitation of the hand effective? Stroke is a leading cause of mortality and disability worldwide, negatively impacting the overall quality of life of patients. Most stroke survivors suffer from different types of motor impairments, despite progress in the field. Since neurorehabilitation of the upper limbs remains challenging, robotic-assisted therapies have been studied and developed in recent years. These methods have been established as safe and practical therapies when used in addition to neurorehabilitation programs [1]. More precisely, in the last two decades, robotic devices that focused on training the proximal upper extremity were assessed, showing results similar to dose-matched conventional therapies [2].

A multimodal approach to robot-assisted neurocognitive rehabilitation of the hand

Distal arm sensorimotor function is essential for quality of life after a stroke: it is crucial for the ability to perform daily activities, yet it is usually severely affected after a cerebrovascular accident, with a low probability of regaining full function [3]. However, scientific data demonstrate the possibility of recovery with intensive motor training provided by robotic-assisted devices [4, 5]. Until now, the focus has been on movement practice without implementing a therapeutic paradigm adapted to the capabilities of the technology in question.

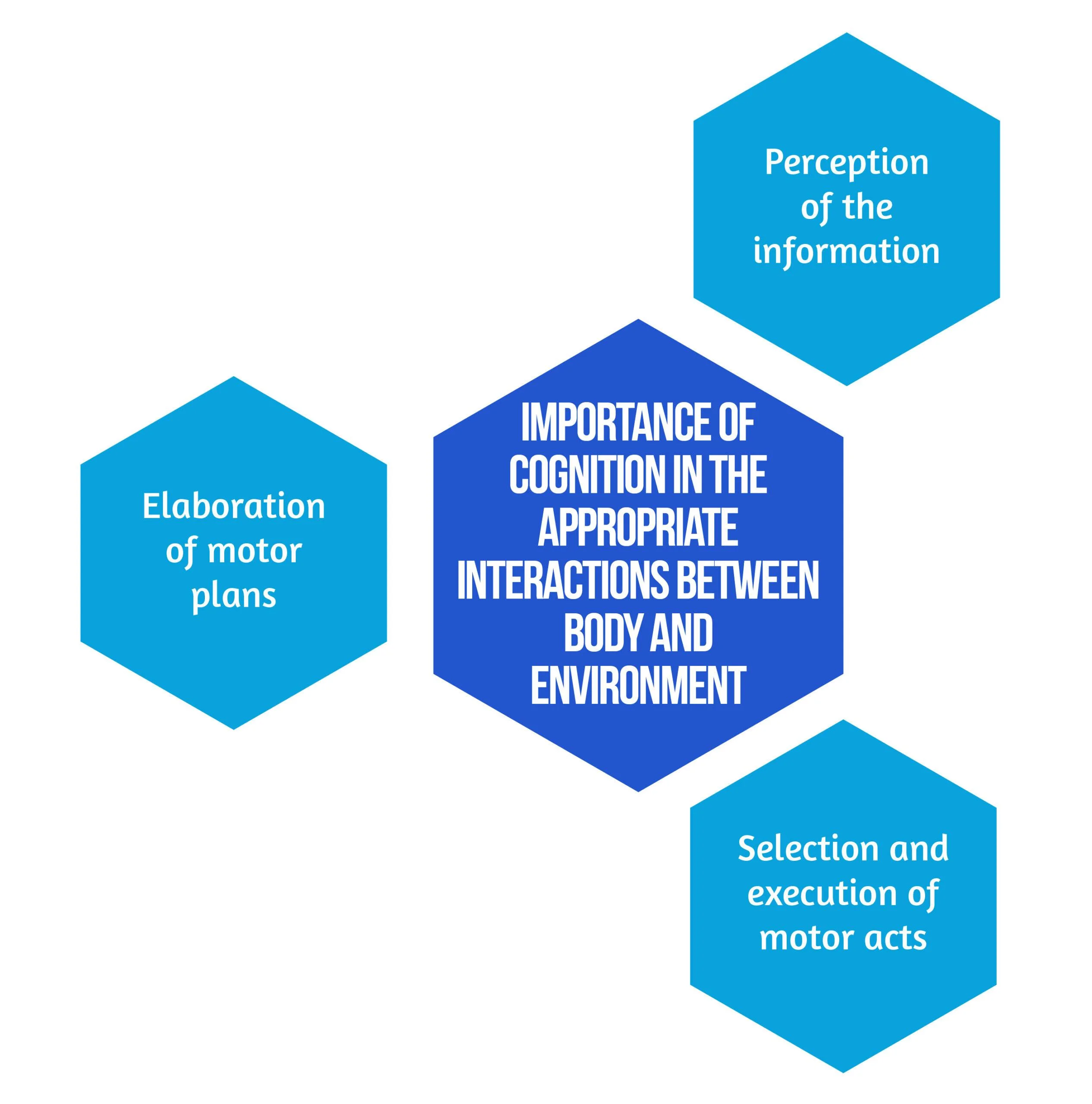

In the study by Ranzani et al. [6] from 2020, the goal was to investigate sensorimotor robotic-assisted rehabilitation of hand function and cognition training in patients with subacute stroke. The researchers based their comprehensive approach on the fact that cognition is essential for appropriate interactions between body and environment, such as [6]:

Figure 1. Importance of Cognition

Secondary objectives derived from the hypothesis are that neurocognitive robotic-assisted hand recovery would also improve motor, sensory, and cognitive functions in this category of patients. This method is particularly relevant for hand rehabilitation due to the importance of the cognitive processing of sensory information. Furthermore, combining multimodal inputs requires the involvement of associative cortices that are essential for learning and, consequently, neuronal plasticity and recovery [7]. Even though few studies have compared neurocognitive therapies to other rehabilitation treatments [8, 9], preliminary evidence suggested promising results in the following:

- Enhancing upper limb function

- Improving the ability to conduct daily tasks

- Improving the overall quality of life [8,9].

Inspired by the neurocognitive approach, the authors [6] implemented this concept on a robotic-assisted device focused on exercises including grasping and pronation-supination. Virtual objects were produced by the robot, both visually and haptically, simulating the tangible materials used in traditional recovery [6].

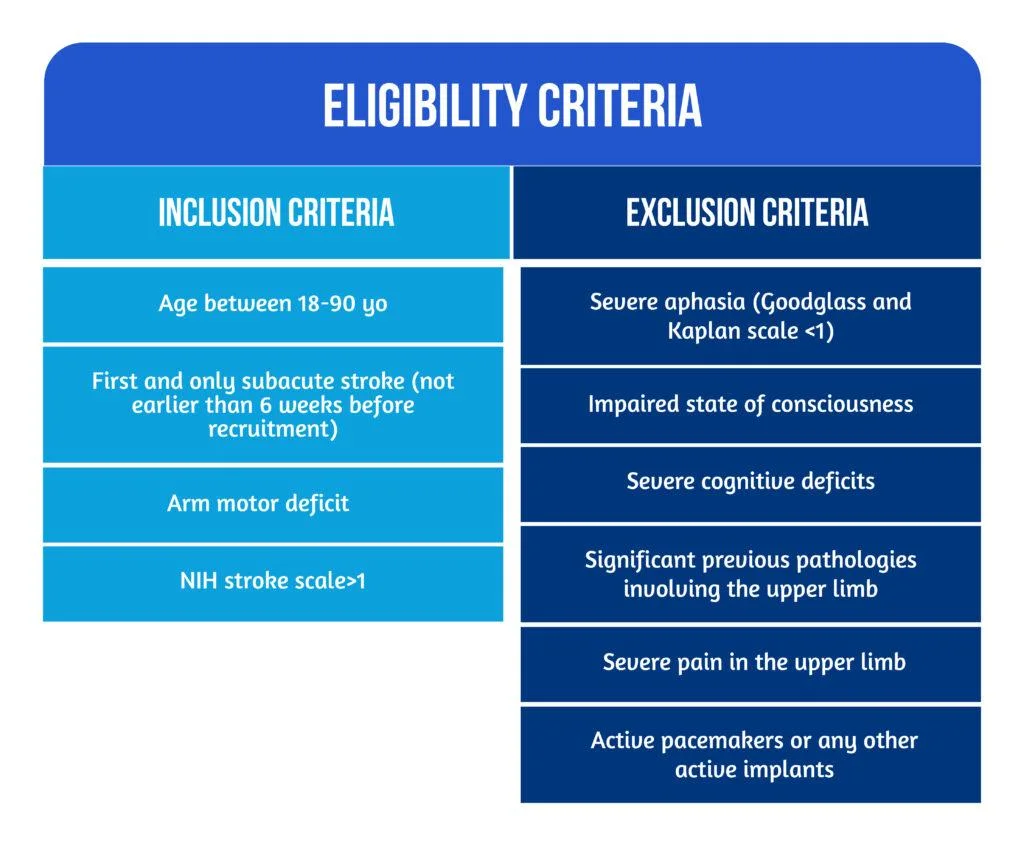

Study design

The study conducted by Ranzani et al. [6] was a randomized controlled trial that took place in Switzerland. Patients were randomly assigned into two groups: the robot-assisted group (RG), which received neurocognitive therapy with “ReHapticKnob” (Fig. 2), and the control group (CG), which received dose-matched classical neurocognitive therapy. With the main focus on hand function, all participants had three neurocognitive therapy sessions per day over four weeks. Each session lasted 45 minutes, and in the RG, one session was replaced by robot-assisted therapy. The subjects were under the careful supervision of a specialized therapist who adapted the intensity and difficulty level of the exercises to each patient. The type and number of exercises performed in the RG were also assessed to match the therapy type and dose performed in conventional recovery programs [6].

Figure 2. Picture of a subject with stroke using the rehabilitation robot (available from [6])

Between April 2013 and March 2017, 33 patients who suffered a subacute stroke were evaluated and included in the study. Seventeen subjects were randomized to the RG, while 16 were allocated to the CG. Twenty-seven patients received the allocated therapy and completed the T1 assessment, while 23 completed the entire protocol. Six patients withdrew before the T1 evaluation due to medical reasons or lack of motivation. No adverse events related to the interventions were noted [6]. The eligibility criteria are described in Figure 3 below:

Figure 3. Patient Eligibility

The roles of robotic devices in improving upper extremity function

As mentioned above, the neurocognitive method proposed in this study implied both sensorimotor and cognitive components, which are equally important during the performance of complicated tasks and daily activities. Patients were instructed to explore different objects, such as sponges, sticks, and strings, to discern their properties by using haptic and postural senses. A robotic device is an ideal therapeutic instrument for performing such tasks because it can render a complex range of stimuli in a repeatable, well-controlled manner [10].

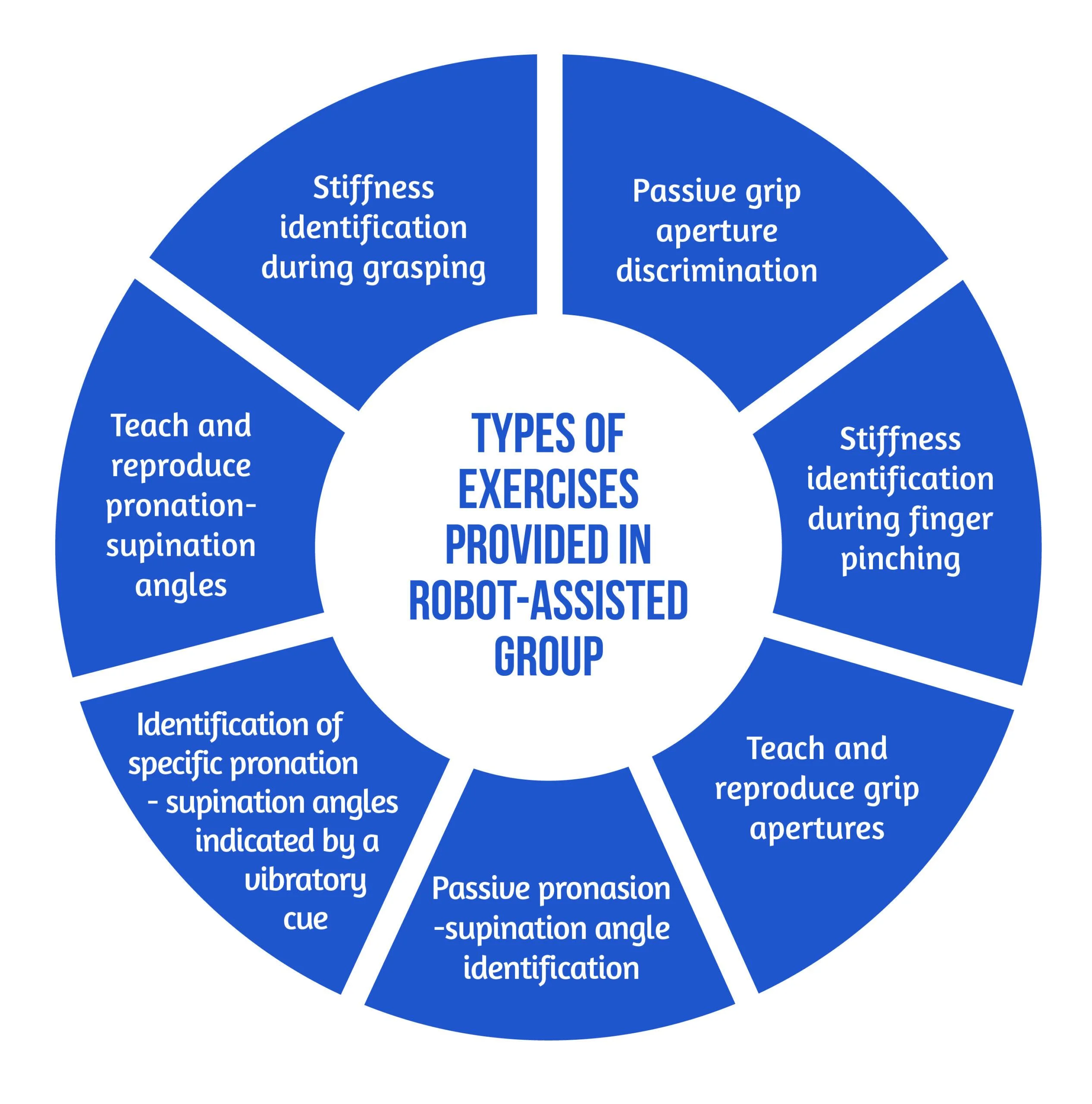

The neurorehabilitation program provided in the RG group consisted of seven types of exercises [6], as showcased in Figure 4 below:

Figure 4. Types of Exercises

The motor aspects of the intervention included symmetric thumb and finger flexion/extension and forearm pronation/supination, executed separately or combined. The sensory aspects included encoding the following types of somatosensory signals without visual information: sponge/spring stiffness, object shape and size, arm positioning, and vibratory cues. The cognitive element of the training, executed passively (with guidance from the robot or therapist) or actively (by the subject), required the elaboration/recognition of perceptual information (object length, stiffness) and encoding/decoding of this information in working memory for comparison purposes of more than one item, along with planning and executing the specific motor plans [6].

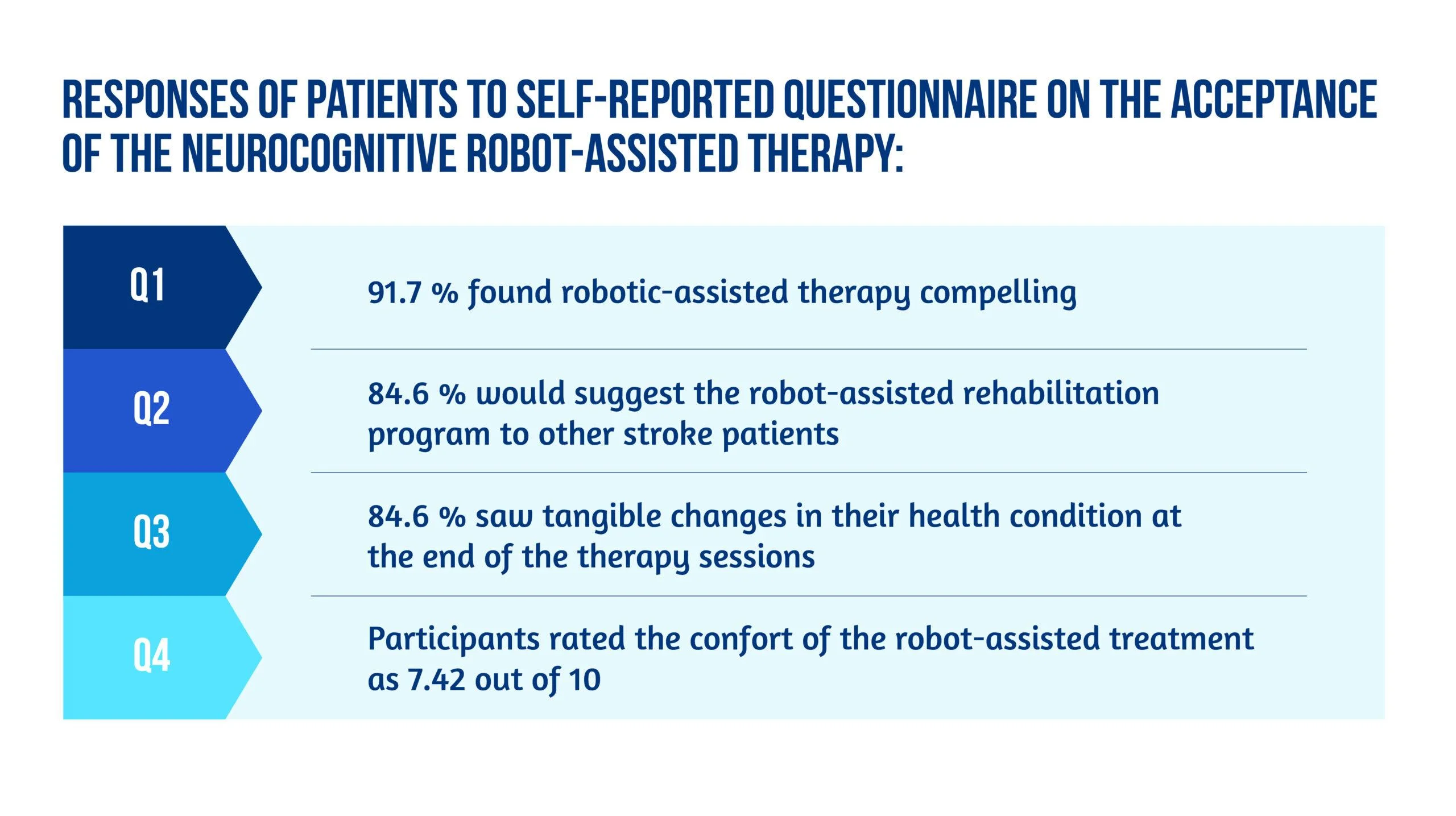

The study’s primary outcome was the change in upper limb motor impairment from baseline to the end of treatment (T0-T1), quantified with the Fugl-Meyer Assessment of the Upper Extremity (FMA-UE). Different motor, sensory, and cognitive scales were used for the secondary outcomes. The average number of task repetitions and the therapy intensity were assessed to compare the two study groups in terms of dose matching. To measure acceptance of the neurocognitive robot-assisted program, a 4-item questionnaire was developed. The two study groups were evaluated and compared on all outcome measures after the intervention (T1-T0) and at the regular follow-ups (T2-T0 and T3-T0) [6].

Results, discussions, and future perspectives

According to the equivalence analysis, the change in FMA-UE in the robotic-assisted group may be regarded as non-inferior to that in the control group. After 4 weeks of therapy (T1), both groups showed improvements in all secondary clinical scores, covering sensory and cognitive deficits as well as motor impairment [6]. By the end of the study, subjects randomized to the RG tended to show better results, and the changes in FMA-UE were sustained at T2 and T3. However, when changes in clinical measures were compared over time, there were no significant between-group differences [6].

According to the supervising therapist, the RG performed an average of 71.49 task repetitions per treatment session, compared to 73.47 in the CG. There was no statistically significant difference in therapy intensity between robot-assisted and traditional therapy sessions [6].

Regarding neurocognitive therapy sessions, the average daily quantity of occupational therapy and/or lower limb kinesiotherapy did not differ statistically between the groups.

The acceptance of the neurocognitive robot-assisted therapy was evaluated by using a self-reported questionnaire answered by 12 patients [6], as showcased in Figure 5:

Figure 5. Patient responses

Unlike other robot-assisted rehabilitation studies that focus primarily on locomotor training, this method fully utilized the robot’s haptic rendering capabilities and suggested a therapy regimen tailored to this potential [6].

Ranzani and colleagues were able to demonstrate that this strategy was well-liked and recommended by the majority of patients. It could also be included in patients’ daily routines in the subacute stage of stroke recovery. Although the questionnaire revealed slight pain from finger fixation, and the difficulty level was sometimes rated as excessively high in three out of seven exercises [6], most subjects considered the program encouraging and pleasant, and they noticed tangible benefits to their health after completing it.

The findings of the equivalence test on the change in FMA-UE show that motor recovery in the RG was not inferior to that in the CG for this specific intervention. Little to no difference between traditional and robotic-assisted therapies should usually be expected, since the dosage and therapeutic movements are meant to be identical between the study groups. Furthermore, the idea that a traditional treatment session might be replaced without compromising the overall rehabilitation program opens up new possibilities for the development of robot-assisted therapy [6].
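The logic of a non-inferiority claim can be sketched in a few lines of code. The example below is only an illustration and not the analysis performed in [6]: it assumes a confidence-interval approach with a normal approximation, and the function name `non_inferior`, the margin values, and the two lists of change scores are hypothetical values invented for the example, not trial data.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def non_inferior(robot_changes, control_changes, margin, alpha=0.05):
    """One-sided non-inferiority check on the difference in mean change.

    The robot group counts as non-inferior when the lower bound of the
    one-sided (1 - alpha) confidence interval for
    mean(robot) - mean(control) lies above -margin.
    """
    diff = mean(robot_changes) - mean(control_changes)
    # Standard error of the difference (unequal variances allowed).
    se = sqrt(variance(robot_changes) / len(robot_changes)
              + variance(control_changes) / len(control_changes))
    # Normal approximation; a t quantile would be more exact for small samples.
    z = NormalDist().inv_cdf(1 - alpha)
    lower = diff - z * se
    return diff, lower, lower > -margin


# Hypothetical FMA-UE change scores (T1 - T0), NOT data from the trial.
robot = [7, 9, 6, 8, 10, 7, 9, 8]
control = [8, 7, 9, 6, 8, 7, 9, 8]

# Hypothetical non-inferiority margin of 5 FMA-UE points.
diff, lower, verdict = non_inferior(robot, control, margin=5.0)
print(f"difference={diff:.2f}, lower bound={lower:.2f}, non-inferior={verdict}")
```

The verdict depends on the chosen margin as much as on the data: with the same hypothetical scores, a much stricter margin of 0.5 points would no longer support non-inferiority, which is why the margin has to be fixed before the analysis.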

Conclusion on robot-assisted neurocognitive rehabilitation of the hand

This article reported the findings of a randomized controlled trial that compared robot-assisted therapy with conventional therapy in patients with subacute stroke and impaired hand function. Although the study included a limited number of participants, the results showed that robot-assisted treatments might be successfully incorporated into clinical neurorehabilitation routines [6]. In other words, the trial illustrates that even a small sample can yield clinically meaningful evidence. Compared with a conventional dose-matched neurocognitive program, this innovative approach was not inferior in terms of motor recovery.

Exposing stroke patients early to such patient-tailored robot-assisted therapy programs opens the door to using the technology in the clinic with little therapist supervision, or at home after hospital discharge, to increase the dose of hand therapy [6].

Bibliography

- Veerbeek JM, Langbroek-Amersfoort AC, van Wegen EE, Meskers CG, Kwakkel G. Effects of robot-assisted therapy for the upper limb after stroke: a systematic review and meta-analysis. Neurorehabil Neural Repair. 2017;31(2):107–21. doi: 10.1177/1545968316666957

- Maciejasz P, Eschweiler J, Gerlach-Hahn K, Jansen-Troy A, Leonhardt S. A survey on robotic devices for upper limb rehabilitation. J Neuroeng Rehabil. 2014;11(1):3. doi: 10.1186/1743-0003-11-3

- Fischer HC, Stubblefield K, Kline T, Luo X, Kenyon RV, Kamper DG. Hand rehabilitation following stroke: a pilot study of assisted finger extension training in a virtual environment. Top Stroke Rehabil. 2007;14(1):1–12. doi: 10.1310/tsr1401-1

- Lambercy O, Dovat L, Yun H, Wee SK et al. Effects of a robot-assisted training of grasp and pronation/supination in chronic stroke: a pilot study. J Neuroeng Rehabil. 2011;8(1):63. doi: 10.1186/1743-0003-8-63

- Hsieh YW, Lin KC, Wu CY, Shih TY, Li MW, Chen CL. Comparison of proximal versus distal upper-limb robotic rehabilitation on motor performance after stroke: a cluster controlled trial. Sci Rep. 2018;8(1):2091. doi: 10.1038/s41598-018-20330-3

- Ranzani R, Lambercy O, Metzger JC, Califfi A et al. Neurocognitive robot-assisted rehabilitation of hand function: a randomized control trial on motor recovery in subacute stroke. J Neuroeng Rehabil. 2020;17(1):115. doi: 10.1186/s12984-020-00746-7

- Van de Winckel A, Wenderoth N, De Weerdt W, Sunaert S et al. Frontoparietal involvement in passively guided shape and length discrimination: a comparison between subcortical stroke patients and healthy controls. Exp Brain Res. 2012;220(2):179–89. doi: 10.1007/s00221-012-3128-2

- Sallés L, Martín-Casas P, Gironès X, Durà MJ et al. A neurocognitive approach for recovering upper extremity movement following subacute stroke: a randomized controlled pilot study. J Phys Ther Sci. 2017;29(4):665–72. doi: 10.1589/jpts.29.665

- Lee S, Bae S, Jeon D, Kim KY. The effects of cognitive exercise therapy on chronic stroke patients’ upper limb functions, activities of daily living and quality of life. J Phys Ther Sci. 2015;27(9):2787–91. doi: 10.1589/jpts.27.2787

- Metzger J-C, Lambercy O, Califfi A, Dinacci D et al. Assessment-driven selection and adaptation of exercise difficulty in robot-assisted therapy: a pilot study with a hand rehabilitation robot. J Neuroeng Rehabil. 2014;11(1):154. Available at: https://jneuroengrehab.biomedcentral.com/articles/10.1186/1743-0003-11-154

[Abstract] Vagus Nerve Stimulation (VNS) Paired With Upper Extremity Rehabilitation In Chronic Stroke: Improvements In Wrist And Hand Impairment And Function

Posted by Kostas Pantremenos in Paretic Hand, REHABILITATION on February 11, 2023

Abstract

Introduction: In the VNS-REHAB trial, vagus nerve stimulation (VNS) paired with task-specific arm and hand rehabilitation (Paired VNS) led to clinically meaningful improvements in both impairment and function of the upper extremity in people with chronic ischemic stroke. In this post hoc analysis of trial data, we assessed whether improvements were driven by proximal (shoulder and elbow) and/or distal (wrist and hand) components of the Upper Extremity Fugl-Meyer Assessment (FMA-UE). We also wanted to determine whether FMA-UE improvements correlated with improvements in functional outcome.

Methods: Chronic stroke participants (n=108) with moderate-severe UE impairment (FMA-UE 35.1±8) were implanted with the VNS device and randomized to Paired VNS (n=53) or Controls (n=55). Participants underwent 6 weeks of in-clinic therapy followed by 90 days of a home exercise program. Outcomes, including the FMA-UE and Wolf Motor Function Test (WMFT), were collected after in-clinic therapy (Post-1) and 90 days of home therapy (Post-90).

Results: Distal FMA-UE change was significantly greater after Paired VNS compared to Controls, both at Post-1 (2.43±2.52 vs. 0.64±2.33; 95% CI 0.87-2.72, p<0.001, Cohen’s d=0.74) and Post-90 (2.58±2.88 vs. 0.93±3.16; 95% CI 0.5-2.81, p=0.005, Cohen’s d=0.55). Proximal FMA-UE change was also greater after Paired VNS compared to Controls but did not reach statistical significance at either timepoint. At Post-90, both proximal (r=0.50, p<0.0001) and distal (r=0.42, p=0.001) FMA-UE change significantly correlated with WMFT change after Paired VNS, but not in Controls (proximal: r=0.24, p=0.06; distal: r=0.14, p=0.24).

Conclusions: The data suggest that Paired VNS leads to greater improvement in the distal upper extremity compared to rehabilitation alone and that improvements in FMA-UE scores are correlated with improvements in function. These findings suggest that the improvements seen with Paired VNS are of functional importance. A greater understanding of region-specific arm and hand recovery after Paired VNS therapy will facilitate planning and implementation of a personalized neurorehabilitation approach based on patient-specific impairments.
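As a quick consistency check, the effect sizes quoted in the Results can be recomputed from the reported means and standard deviations. The sketch below makes two assumptions that the abstract does not state: that Cohen's d was computed with the pooled standard deviation, and that all randomized participants (53 and 55) contributed data at both timepoints. The function name `cohens_d` is chosen for the example.

```python
from math import sqrt


def cohens_d(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Standardized mean difference using the pooled standard deviation."""
    pooled_var = ((n_a - 1) * sd_a ** 2 + (n_b - 1) * sd_b ** 2) / (n_a + n_b - 2)
    return (mean_a - mean_b) / sqrt(pooled_var)


# Distal FMA-UE change (mean, SD) as reported in the Results.
# Assumed group sizes: Paired VNS n=53, Controls n=55 at both timepoints.
post_1 = cohens_d(2.43, 2.52, 53, 0.64, 2.33, 55)
post_90 = cohens_d(2.58, 2.88, 53, 0.93, 3.16, 55)

print(round(post_1, 2), round(post_90, 2))  # 0.74 0.55
```

Under these assumptions the recomputed values match the reported Cohen's d of 0.74 at Post-1 and 0.55 at Post-90.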